AI search is now a real acquisition surface. Prospects ask ChatGPT, Gemini, Claude, and Perplexity “best options for…”, “pricing for…”, “who should I trust for…”, and they often get a single short list of recommendations.

That changes the operational question for marketing and SEO teams:

- Not “Do we rank?”, but “Are we mentioned and recommended accurately?”

- Not “Did traffic go up?”, but “Which prompts, topics, and locations trigger (or exclude) our brand?”

- Not “Can we publish more?”, but “Can we ship AI-ready fixes fast and prove impact?”

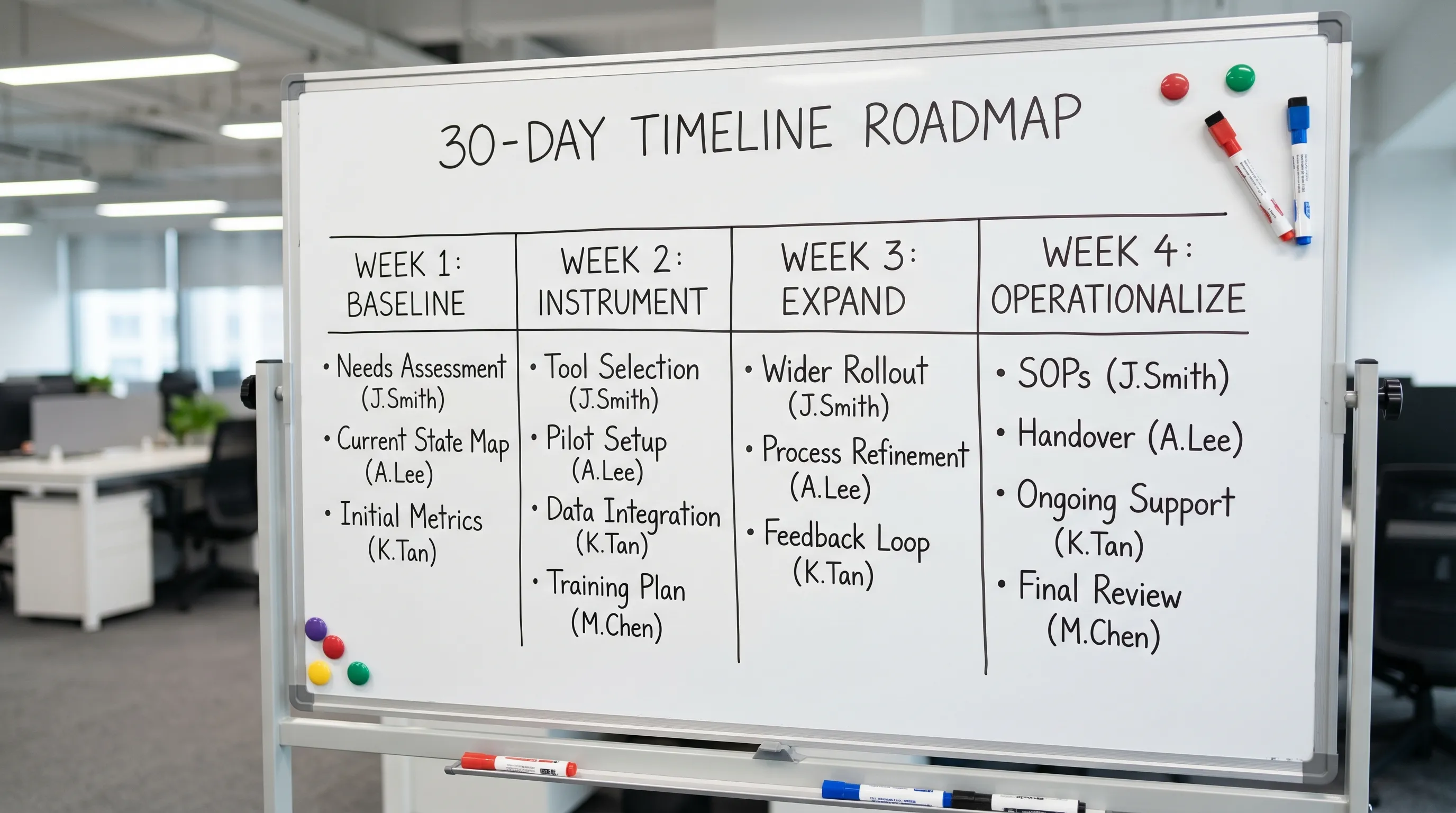

This playbook is for marketing teams adopting AI that want a pragmatic 30-day launch for AI visibility tracking, without turning it into a six-month research project.

What “AI visibility tracking” actually means (and why teams get it wrong)

AI visibility tracking is the discipline of measuring and improving how AI engines:

- Mention your brand (presence)

- Describe you (accuracy)

- Recommend you (ranked inclusion, comparisons)

- Cite sources about you (citations and authority signals)

- Handle variations (locations, products, categories, competitors)

A common mistake is treating this like classic rank tracking. AI outputs are probabilistic, vary by phrasing, and often compress many sources into one answer. So the goal is not “one position”. It is coverage, consistency, and correctness across the prompts that matter.

CapstonAI is designed for this: it scans and tracks AI mentions across major AI engines, maps prompts to mentions, flags blind spots, and helps teams publish AI-ready FAQs and metadata (with CMS integration for faster fixes).

The 30-day goal: from “we should” to a repeatable operating system

A good 30-day launch produces four outcomes:

- A baseline visibility snapshot (where you appear, where you do not, and how you are portrayed)

- A prioritized fix list tied to prompts and revenue intent

- A cadence for re-scans, alerts, and ownership

- A reporting layer executives can understand (share of voice, coverage, and business impact)

The rest of this article is the step-by-step plan.

Before day 1: define scope so your tracking does not collapse under complexity

AI visibility can sprawl fast. Before you run anything, make three decisions.

1) Choose your “money prompts” first

Money prompts are the highest-intent questions where an AI recommendation can lead to pipeline or sales, such as:

- “Best [category] for [use case]”

- “Top alternatives to [competitor]”

- “What is the best [solution] for [industry] teams?”

- “Which [platform] agency should I use for [outcome]?”

If you only track generic, top-of-funnel prompts, you will improve visibility without improving outcomes.

2) Decide what counts as success (your AI visibility KPIs)

Use a small KPI set you can operationalize weekly.

| KPI | What it measures | Why it matters in 30 days |
|---|---|---|
| Presence rate | How often your brand is mentioned for tracked prompts | Core baseline and fastest win indicator |
| Recommendation rate | How often you are recommended (not just named) | Closer to commercial intent |
| AI share of voice | Your mention share vs competitors across the prompt set | Executive-friendly and comparable |
| Prompt coverage | % of key prompt themes where you show up at least once | Reveals blind spots and content gaps |
| Accuracy flags | Incorrect claims, wrong categories, outdated details | Brand safety and conversion friction |
| Time-to-fix | Days from issue found to live fix | Proves your new operating cadence |

If you want to go deeper later, you can add sentiment, citation quality, and location-level coverage. But start here.
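To make the KPI definitions concrete, here is a minimal sketch of how four of them could be computed from scan results. The row format, brand names, and the choice to count each (prompt, engine) observation once are assumptions for illustration, not a CapstonAI export format.

```python
from collections import Counter

# Each row is one (prompt, engine) observation from a scan.
# Field names and brand names are hypothetical sample data.
ROWS = [
    {"theme": "pricing", "brands": ["Acme", "Rival"], "recommended": ["Rival"]},
    {"theme": "pricing", "brands": ["Rival"], "recommended": ["Rival"]},
    {"theme": "alternatives", "brands": ["Acme"], "recommended": ["Acme"]},
    {"theme": "integrations", "brands": [], "recommended": []},
]


def visibility_kpis(rows, brand):
    """Compute presence, recommendation, share of voice, and theme coverage."""
    total = len(rows)
    if total == 0:
        raise ValueError("need at least one scan row")
    mentioned = [r for r in rows if brand in r["brands"]]
    recommended = [r for r in rows if brand in r["recommended"]]
    # Share of voice: this brand's mentions divided by all brand mentions
    all_mentions = Counter(b for r in rows for b in r["brands"])
    total_mentions = sum(all_mentions.values())
    themes = {r["theme"] for r in rows}
    covered = {r["theme"] for r in mentioned}
    return {
        "presence_rate": len(mentioned) / total,
        "recommendation_rate": len(recommended) / total,
        "share_of_voice": all_mentions[brand] / total_mentions if total_mentions else 0.0,
        "prompt_coverage": len(covered) / len(themes),
    }


kpis = visibility_kpis(ROWS, "Acme")
for name, value in kpis.items():
    print(f"{name}: {value:.0%}")
```

Accuracy flags and time-to-fix are left out on purpose: the first needs human review, and the second needs issue dates from your ledger.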

3) Assign owners (AI adoption is a process change, not a tool install)

AI visibility tracking touches multiple teams. Assign clear ownership up front.

| Workstream | Primary owner | Supporting roles |
|---|---|---|
| Prompt library and prioritization | Demand Gen or SEO lead | Sales, Customer Success |
| Brand truth and compliance | Product marketing or Legal | Support, Leadership |
| Site fixes and publishing | SEO, Web, Content ops | Engineering, CMS admin |
| Reporting and insights | Marketing analytics | RevOps |

If ownership is fuzzy, the data becomes “interesting” instead of actionable.

Week 1 (Days 1–7): establish your baseline and your prompt map

Week 1 is about learning where you stand and creating a tracking structure you can maintain.

Build a focused prompt library

Aim for 50 to 150 prompts to start. That is usually enough to represent:

- Your core categories (what you sell)

- Your top use cases (why people buy)

- Your top industries (who buys)

- Your top competitors (who you replace)

- Your brand and product names (branded intent)

Keep the language natural, like a real buyer question.
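One way to draft a first prompt library is to expand a few templates against your own categories, use cases, and competitors, then edit the results by hand so they read like real buyer questions. The sketch below assumes two templates and placeholder values; every name in it is hypothetical.

```python
from itertools import product

# Hypothetical templates and fill-in values; replace with your own.
TEMPLATES = {
    "category": "Best {category} for {use_case}",
    "competitor": "Top alternatives to {competitor}",
}
CATEGORIES = ["CRM software", "email marketing platform"]
USE_CASES = ["small agencies", "B2B SaaS teams"]
COMPETITORS = ["ExampleCo"]


def build_prompt_library():
    """Expand templates into (theme, prompt) pairs, dropping exact duplicates."""
    prompts = []
    for category, use_case in product(CATEGORIES, USE_CASES):
        text = TEMPLATES["category"].format(category=category, use_case=use_case)
        prompts.append(("category", text))
    for competitor in COMPETITORS:
        text = TEMPLATES["competitor"].format(competitor=competitor)
        prompts.append(("competitor", text))
    # dict.fromkeys keeps first-seen order while removing duplicates
    return list(dict.fromkeys(prompts))


library = build_prompt_library()
for theme, prompt in library:
    print(f"{theme}: {prompt}")
```

Generated prompts are only a starting point. Have sales review them and rewrite any that no buyer would actually type.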

Run your first AI visibility scan

Your first scan should answer:

- Which engines mention you (ChatGPT, Gemini, Claude, Perplexity)

- Which prompt clusters trigger mentions

- Which competitors show up in your place

- What claims are made about you (and whether they are accurate)

If you are new to this category, treat the baseline as discovery, not judgment.

Create a “mention ledger” for accountability

Even if you use a platform to store results, create a simple internal ledger view with:

- Prompt

- Engine

- Mentioned? (Y/N)

- Recommended? (Y/N)

- Competitors shown

- Accuracy notes

- URL(s) cited (if any)

- Owner

- Next action

This is the bridge between insight and execution.
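If you keep the ledger in a spreadsheet, a small script can keep the columns consistent across scans. This is a minimal sketch that mirrors the ledger fields listed above. The class name, field names, and sample entry are assumptions for illustration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class LedgerEntry:
    prompt: str
    engine: str
    mentioned: bool
    recommended: bool
    competitors_shown: str  # semicolon-separated to keep the CSV flat
    accuracy_notes: str
    cited_urls: str
    owner: str
    next_action: str


def ledger_to_csv(entries):
    """Serialize ledger entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(LedgerEntry)])
    writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        # Store booleans as Y/N to match the ledger columns
        row["mentioned"] = "Y" if entry.mentioned else "N"
        row["recommended"] = "Y" if entry.recommended else "N"
        writer.writerow(row)
    return buffer.getvalue()


# Hypothetical sample entry
entries = [
    LedgerEntry(
        prompt="Best CRM software for small agencies",
        engine="ChatGPT",
        mentioned=True,
        recommended=False,
        competitors_shown="Rival; ExampleCo",
        accuracy_notes="Lists a discontinued plan",
        cited_urls="https://www.example.com/pricing",
        owner="SEO lead",
        next_action="Update pricing page FAQ",
    )
]
csv_text = ledger_to_csv(entries)
print(csv_text)
```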

Week 1 deliverables

| Deliverable | Definition of done |
|---|---|
| Prompt library v1 | Grouped by theme and intent, approved by marketing + sales |
| Baseline scan | Stored results, initial presence and share of voice snapshot |
| Issue log | Top accuracy risks and top “missing prompt themes” identified |

Week 2 (Days 8–14): fix the foundation (entity clarity, metadata, and AI-readable answers)

Week 2 is where you earn fast improvement. You are not trying to “game” AI engines. You are reducing ambiguity and increasing extractable, consistent truth.

Fix your entity signals first

AI systems often struggle when your site does not clearly state:

- Who you are (Organization)

- What you offer (products, services, categories)

- Where you operate (locations, service areas)

- How to verify key facts (contact, legal, policies)

Practical actions include:

- Clean up your About page for crisp, factual positioning

- Ensure consistent brand naming (avoid multiple variants for the same product)

- Make pricing and packaging pages unambiguous (even if prices are “contact sales”, explain what drives cost)

Publish AI-ready FAQs on high-intent pages

The goal is not “FAQ content for SEO”. The goal is concise Q&A blocks that AI engines can quote without distortion.

Good AI-ready FAQs:

- Match natural buyer phrasing

- Answer in 2 to 5 sentences

- Use definitions, constraints, and “it depends” boundaries

- Avoid vague superlatives

CapstonAI supports AI-ready FAQ and metadata publishing, which can shorten the gap between insight and fixes.
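Two of the FAQ criteria above (2 to 5 sentences, no vague superlatives) are easy to check before publishing. The sketch below is a rough lint, not a quality score: the sentence splitter is a simple regex, and the superlative list is an assumed starting set you would tune to your own brand voice.

```python
import re

# Assumed starter list; extend with phrases your team overuses.
VAGUE_SUPERLATIVES = {"best-in-class", "world-class", "leading", "cutting-edge", "unmatched"}


def lint_faq_answer(answer):
    """Return a list of issues found in one FAQ answer (empty list means clean)."""
    issues = []
    # Naive split: sentence-ending punctuation followed by whitespace
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not 2 <= len(sentences) <= 5:
        issues.append(f"expected 2 to 5 sentences, found {len(sentences)}")
    words = set(re.findall(r"[a-z][a-z-]*", answer.lower()))
    flagged = sorted(words & VAGUE_SUPERLATIVES)
    if flagged:
        issues.append("vague superlatives: " + ", ".join(flagged))
    return issues


weak = "We are the leading platform."
better = (
    "Cost depends on how many prompts and locations you track. "
    "Smaller teams usually start with a single brand. "
    "Multi-brand setups are scoped with sales."
)
print(lint_faq_answer(weak))
print(lint_faq_answer(better))
```

A lint like this catches format problems only. Whether the answer is true still needs a review by whoever owns brand truth.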

Validate structured data where it is truly appropriate

Structured data can help disambiguate entities and page intent. Follow Google’s guidelines and only use markup that matches visible content.

- Review Google’s structured data documentation

- Validate using Rich Results Test when relevant

Do not add schema “because it might help”. Add it because it makes your content more machine-interpretable and consistent.
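As an illustration, here is a minimal sketch that builds schema.org `Organization` markup as JSON-LD. The brand name, URLs, and service areas are placeholders, and the helper function names are hypothetical. Only include properties that match what is visibly stated on the page.

```python
import json


def organization_jsonld(name, url, same_as, area_served):
    """Build a schema.org Organization object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,          # official profiles that confirm the entity
        "areaServed": area_served,  # where you operate
    }


def to_script_tag(data):
    """Wrap JSON-LD in the script tag used to embed it in a page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values for illustration only
markup = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example-brand"],
    area_served=["US", "CA"],
)
print(to_script_tag(markup))
```

Run the generated markup through a validator before it ships, and keep it in sync with the About page so the two never disagree.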

Week 2 deliverables

| Deliverable | Definition of done |
|---|---|
| Foundation fixes shipped | Priority pages updated (entity clarity, positioning, factual blocks) |
| AI-ready FAQs live | FAQs published on top commercial pages and key comparison pages |
| Metadata and technical hygiene | Titles/descriptions improved where needed, schema validated where appropriate |

Week 3 (Days 15–21): expand coverage (competitors, locations, and prompt blind spots)

Week 3 is about turning a baseline into a coverage strategy.

Do competitor and market tracking on purpose

Competitor tracking is not just “who beats us”. It reveals:

- Which sources AI engines cite when recommending others

- Which claims competitors “own” (and whether they are supported)

- Which comparison prompts you are missing entirely

Your output should be a short list of:

- Competitors that dominate your priority prompt clusters

- The pages and sources that appear to influence AI answers

- The content formats that win (comparison pages, guides, definitions, FAQs)

Handle multi-location realities early (if applicable)

If you operate across cities, regions, or countries, AI answers can flatten differences and introduce errors (wrong service area, wrong address, wrong offering).

By Week 3, ensure you have:

- A clear location directory or store locator strategy

- Consistent NAP details where relevant (name, address, phone)

- Location-specific FAQs (availability, shipping, service coverage)

CapstonAI supports multi-location brand management, which is useful when you need visibility tracking that does not treat every market as identical.

Create a “prompt gap backlog” tied to pages, not ideas

For every prompt theme where you are missing, define:

- The page that should win that theme (existing or new)

- The factual points the page must include

- The FAQ questions that should be answered verbatim

- The internal owner and publish date

This keeps AI adoption grounded in execution.
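A backlog item is only actionable when all four parts are filled in. The sketch below models one item and reports what is still missing. The class and field names are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class GapItem:
    theme: str
    target_page: str = ""
    required_facts: List[str] = field(default_factory=list)
    faq_questions: List[str] = field(default_factory=list)
    owner: str = ""
    publish_date: Optional[date] = None

    def missing_fields(self):
        """List the parts that must be filled before work can start."""
        missing = []
        if not self.target_page:
            missing.append("target_page")
        if not self.required_facts:
            missing.append("required_facts")
        if not self.faq_questions:
            missing.append("faq_questions")
        if not self.owner:
            missing.append("owner")
        if self.publish_date is None:
            missing.append("publish_date")
        return missing


# Hypothetical backlog entries
idea_only = GapItem(theme="alternatives to ExampleCo")
ready = GapItem(
    theme="pricing",
    target_page="/pricing",
    required_facts=["What drives cost"],
    faq_questions=["How is pricing calculated?"],
    owner="Product marketing",
    publish_date=date(2025, 3, 21),
)
print(idea_only.missing_fields())
print(ready.missing_fields())
```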

Week 3 deliverables

| Deliverable | Definition of done |
|---|---|
| Competitor influence summary | Top competitors, top cited sources, key prompt clusters mapped |
| Prompt gap backlog | Gaps mapped to specific pages and owners |
| Location coverage plan (optional) | Location pages and FAQs prioritized by revenue impact |

Week 4 (Days 22–30): operationalize tracking (alerts, cadence, and reporting)

Week 4 is where most programs fail, because teams stop at insights. You need a lightweight operating system.

Set a scan cadence you will actually maintain

A practical starting point:

- Weekly scans for your money prompt set

- Bi-weekly scans for secondary prompts

- Monthly scans for long-tail discovery prompts

If your market changes quickly, increase cadence. If your team is small, keep the prompt set tight and consistent.
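The cadence above can be written down as a small rule so nobody has to remember what is due. This sketch assumes intervals of 7, 14, and 30 days (treating “monthly” as 30 days) and hypothetical prompt set names.

```python
from datetime import date, timedelta

# Assumed intervals in days; "monthly" is approximated as 30.
CADENCE_DAYS = {"money": 7, "secondary": 14, "long_tail": 30}


def due_prompt_sets(last_scanned, today):
    """Return the prompt sets whose interval has elapsed, or that were never scanned."""
    return sorted(
        name
        for name, interval in CADENCE_DAYS.items()
        if name not in last_scanned
        or today - last_scanned[name] >= timedelta(days=interval)
    )


# Hypothetical scan history: everything was last scanned on the same day
last_scanned = {
    "money": date(2025, 3, 3),
    "secondary": date(2025, 3, 3),
    "long_tail": date(2025, 3, 3),
}
print(due_prompt_sets(last_scanned, date(2025, 3, 10)))
print(due_prompt_sets(last_scanned, date(2025, 3, 17)))
```

To increase cadence for a fast-moving market, lower the interval for that set rather than adding more prompts.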

Add alerting for brand-critical issues

Alerts should be reserved for issues that require action, such as:

- Your brand disappears from a high-intent prompt cluster

- A new competitor dominates your tracked prompts

- An accuracy error appears (wrong claims, outdated details)

- A location is misrepresented

CapstonAI includes critical alert dashboards, which help teams react while the issue is still fresh.
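Two of these alert conditions can be detected by comparing consecutive scans. The sketch below assumes each scan is a mapping of prompt cluster to mention counts per brand; the data shape and brand names are hypothetical, and this is not how any particular platform implements alerting. Accuracy and location errors still need a review step, so they are not covered here.

```python
def detect_alerts(previous, current, brand):
    """Compare two scans and return (cluster, alert_type, brand_name) tuples."""
    alerts = []
    for cluster, now in current.items():
        before = previous.get(cluster, {})
        # Alert 1: the brand was mentioned last scan and is gone now
        if before.get(brand, 0) > 0 and now.get(brand, 0) == 0:
            alerts.append((cluster, "brand_disappeared", brand))
        # Alert 2: the most-mentioned brand is one that was absent last scan
        if now:
            leader = max(now, key=now.get)
            if leader != brand and leader not in before and now[leader] > 0:
                alerts.append((cluster, "new_competitor_leads", leader))
    return alerts


# Hypothetical mention counts per prompt cluster
previous = {
    "pricing": {"Acme": 4, "Rival": 3},
    "alternatives": {"Acme": 2, "Rival": 5},
}
current = {
    "pricing": {"Acme": 0, "Rival": 4},
    "alternatives": {"Acme": 2, "Rival": 3, "Newcomer": 6},
}
for alert in detect_alerts(previous, current, "Acme"):
    print(alert)
```

Because AI outputs vary between runs, consider requiring the same condition in two consecutive scans before paging anyone.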

Create an “AI visibility triage” workflow

Define what happens when an issue is detected.

| Issue type | First response | Fix type | Owner |
|---|---|---|---|
| Missing from key prompts | Check prompt coverage and competitor sources | Content upgrade, new page, PR or citations | SEO + Content |
| Incorrect facts | Verify source of claim | Update site truth source, publish clarifying FAQ | Product marketing |
| Wrong category positioning | Identify ambiguity on site | Rewrite category pages, add definitions and use cases | SEO + PMM |
| Multi-location mismatch | Confirm location pages and listings | Location page fixes, directory consistency | Local SEO |
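If alerts feed a ticketing tool or chat channel, the triage table can be encoded as a lookup so each issue is routed the same way every time. This is a minimal sketch: the issue type keys and function name are hypothetical, and the values are copied from the table.

```python
# Keys are hypothetical identifiers; values mirror the triage table.
TRIAGE_PLAYBOOK = {
    "missing_from_key_prompts": {
        "first_response": "Check prompt coverage and competitor sources",
        "fix_type": "Content upgrade, new page, PR or citations",
        "owner": "SEO + Content",
    },
    "incorrect_facts": {
        "first_response": "Verify source of claim",
        "fix_type": "Update site truth source, publish clarifying FAQ",
        "owner": "Product marketing",
    },
    "wrong_category_positioning": {
        "first_response": "Identify ambiguity on site",
        "fix_type": "Rewrite category pages, add definitions and use cases",
        "owner": "SEO + PMM",
    },
    "multi_location_mismatch": {
        "first_response": "Confirm location pages and listings",
        "fix_type": "Location page fixes, directory consistency",
        "owner": "Local SEO",
    },
}


def route_issue(issue_type):
    """Look up the triage rule for an issue type, failing loudly if none exists."""
    try:
        return TRIAGE_PLAYBOOK[issue_type]
    except KeyError:
        raise ValueError(f"No triage rule for issue type: {issue_type!r}") from None


rule = route_issue("incorrect_facts")
print(f"{rule['owner']}: {rule['first_response']}")
```

Failing on unknown issue types is deliberate: an unrouted issue should surface as a gap in the playbook, not disappear.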

Report outcomes in a way execs recognize

Your Week 4 report should fit on one page:

- Presence rate and recommendation rate (this month vs baseline)

- AI share of voice vs top 3 competitors

- Top prompt clusters gained or lost

- Top 5 fixes shipped and their measured effect

- Next 30-day priorities

If you want supporting context, you can add a second page with prompt-level detail.
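The “this month vs baseline” lines are simple differences between two KPI snapshots. The sketch below shows one way to format them in percentage points. The KPI names and all numbers are made-up sample values, not benchmarks.

```python
def kpi_deltas(baseline, current):
    """Return current minus baseline for every KPI present in both snapshots."""
    return {
        name: round(current[name] - baseline[name], 4)
        for name in baseline
        if name in current
    }


def report_lines(baseline, current):
    """Format each KPI as 'name: baseline -> current (change in points)'."""
    lines = []
    for name, delta in kpi_deltas(baseline, current).items():
        lines.append(
            f"{name}: {baseline[name]:.0%} -> {current[name]:.0%} "
            f"({delta * 100:+.0f} pts)"
        )
    return lines


# Made-up sample snapshots for illustration
baseline = {"presence_rate": 0.30, "recommendation_rate": 0.12, "share_of_voice": 0.18}
current = {"presence_rate": 0.42, "recommendation_rate": 0.15, "share_of_voice": 0.21}
for line in report_lines(baseline, current):
    print(line)
```

Reporting changes in percentage points avoids the confusion that relative percentages cause when the baseline is small.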

Week 4 deliverables

| Deliverable | Definition of done |
|---|---|
| Tracking cadence | Calendar, prompt sets, and responsibilities finalized |
| Alerts configured | Brand-critical thresholds agreed and monitored |
| Monthly AI visibility report template | One-page executive view plus optional detail tab |

What to expect after 30 days (realistic outcomes)

In 30 days, most teams can achieve:

- Clear visibility baselines across engines

- Identification of the highest-impact prompt gaps

- Noticeable lift in presence and recommendation rates for targeted prompts (especially where the problem was ambiguity, outdated copy, or missing FAQs)

- A repeatable system for ongoing improvement

What you should not promise in 30 days:

- Full control of AI answers

- Perfect accuracy everywhere

- Immediate revenue attribution for every prompt

AI adoption is about building compounding leverage. Month one builds measurement and execution habits. Months two and three are where share of voice typically starts to move more consistently.

Common pitfalls that break AI visibility programs

Treating it as a one-time audit

AI engines, competitor content, and your own site change constantly. If you do not operationalize scans and fixes, you will regress.

Tracking too many prompts too early

More prompts do not mean better insight. Start with a prompt set your team can act on weekly.

Publishing content without a “truth source”

If your official site does not clearly state key facts (positioning, coverage, policies), AI engines will fill gaps from third parties.

No governance for accuracy risks

When an AI claims something incorrect about your brand, you need a response path. Treat this like reputation management meets SEO.

Frequently Asked Questions

What is AI visibility tracking? AI visibility tracking measures how AI engines (ChatGPT, Gemini, Claude, Perplexity) mention, recommend, and describe your brand across a defined set of prompts.

How is AI visibility tracking different from SEO rank tracking? Rank tracking monitors positions in search results. AI visibility tracking monitors inclusion, recommendation, citations, and accuracy inside AI-generated answers, which can vary by prompt phrasing.

How many prompts should we track to start? Most teams start with 50 to 150 prompts covering key categories, use cases, industries, competitor comparisons, and branded queries.

What are the fastest fixes to improve AI mentions? The fastest wins usually come from clarifying entity signals (who you are, what you do), publishing AI-ready FAQs on commercial pages, and removing ambiguity in category and positioning copy.

Can we do this without engineering help? Often yes for the first month, especially if your CMS supports fast publishing. Engineering help becomes useful for deeper schema, site architecture improvements, and automation at scale.

Launch your 30-day AI visibility program with CapstonAI

If you want to skip the guesswork, CapstonAI helps you track how ChatGPT, Gemini, Claude, and Perplexity mention your brand, diagnose blind spots, and ship AI-ready metadata and FAQs faster.

Start with the free AI visibility audit and get a baseline you can act on: CapstonAI