AI search changed what “performance” looks like.

In classic SEO, clicks and sessions were a clean proxy for value. In AI search (ChatGPT, Gemini, Claude, Perplexity, and Google’s AI-driven experiences), users often get answers without ever visiting a website. That does not mean your brand is losing; it means the scoreboard changed.

If you keep measuring only clicks, you will miss the KPIs that actually determine whether AI systems recommend you, cite you, or silently route demand to competitors.

This guide lays out practical AI search visibility KPIs that measure mentions, citations, and recommendation share, with enough structure to turn “AI visibility” into a trackable growth channel.

Why “click KPIs” break in AI search

AI answers reduce the need to click, but they do not reduce the need to choose.

When an assistant recommends “the best project management tool for agencies” or “where to buy running shoes near me,” it is compressing the decision journey into a short list. In many queries:

- The user never opens a blue link.

- The assistant summarizes multiple sources.

- The assistant may cite a brand without linking to it.

- The assistant may link, but the click happens later, on a different device, or via a branded search.

So the core question becomes: Are you present in the answers that shape buying decisions, and are you presented correctly?

If you want a deeper read on how AI-driven layouts can impact CTR, see “AI Overviews 2025: what should you monitor and how will they hit CTR?”

The new unit of measurement: prompts, not keywords

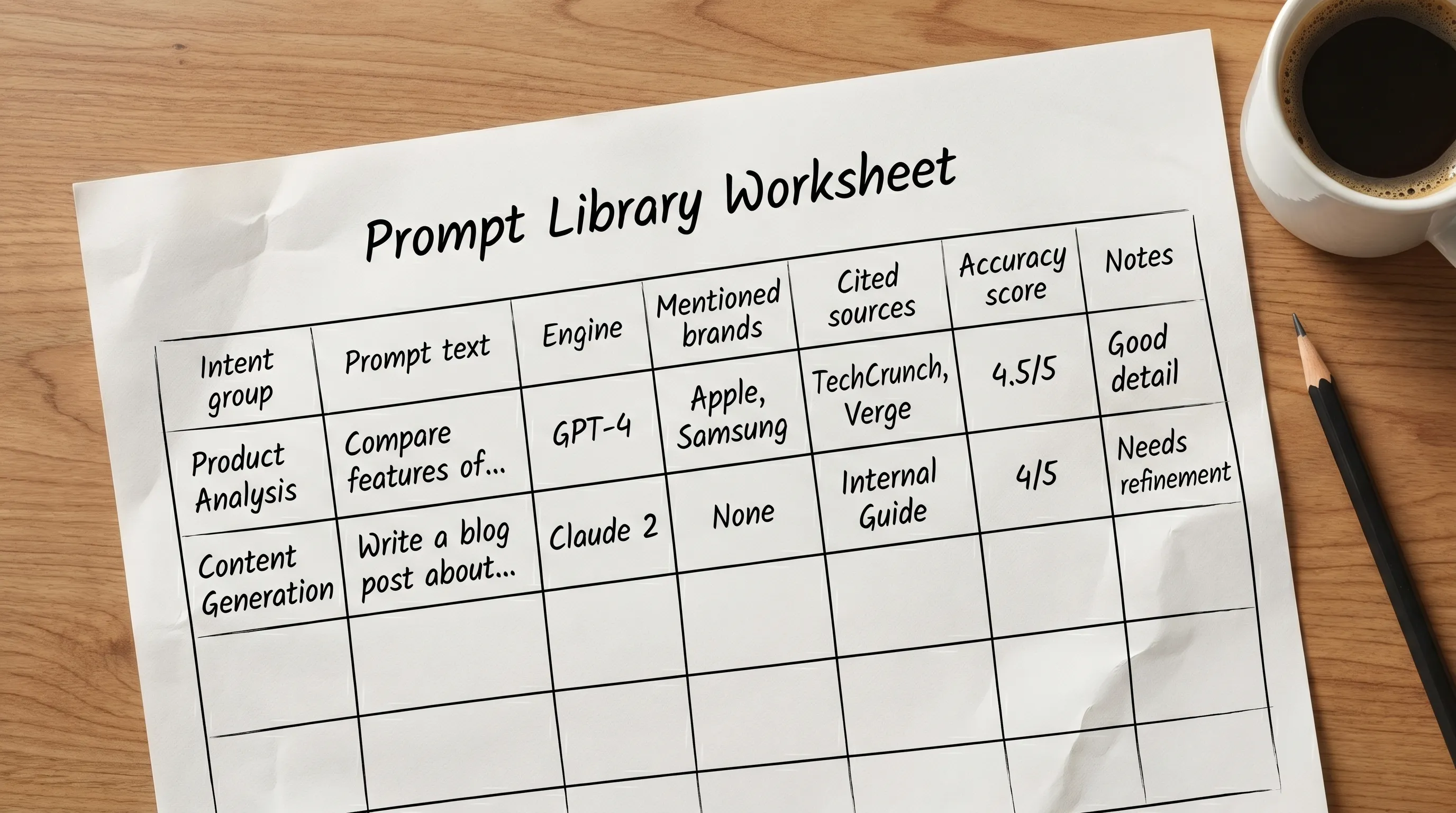

In AI search, a “keyword” is rarely a single phrase. Visibility depends on how people ask, what context they provide, and which follow-up questions appear. That is why the most reliable measurement model starts with a prompt set.

A good prompt set is:

- Intent-based (research, compare, buy, troubleshoot)

- Product-aware (your categories, SKUs, services, locations)

- Competitor-aware (brand vs brand prompts)

- Consistent (same wording used every scan to track trends)

Think of your prompt set as a panel of test queries you run every week, similar to rank tracking, but built for conversational search.

KPI framework: measure presence, quality, stability, and outcomes

Most teams need four KPI families to manage AI search visibility end-to-end:

- Presence: Are you included at all?

- Quality: Are you described accurately and compellingly?

- Stability: Do mentions persist through model updates and competitor moves?

- Outcomes: Is AI visibility creating measurable business impact?

The sections below list the KPIs that matter most, plus what each KPI tells you and what to do when it drops.

Presence KPIs (the “are we in the answer?” metrics)

1) Mention rate

What it measures: How often your brand appears in AI answers for your tracked prompt set.

How to calculate: Mentions / total prompts (per engine, per market, per intent group).

Why it matters: Mention rate is the closest analogue to “ranking visibility” in AI systems.
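
As a rough illustration of the mentions / total prompts calculation, here is a minimal Python sketch. The record layout (`engine`, `intent`, `mentioned`) and the sample data are assumptions made for this example, not a prescribed schema.

```python
from collections import defaultdict

# Hypothetical scan results: one record per answer returned for a tracked prompt.
scan = [
    {"engine": "ChatGPT", "intent": "compare", "mentioned": True},
    {"engine": "ChatGPT", "intent": "buy", "mentioned": False},
    {"engine": "Perplexity", "intent": "compare", "mentioned": True},
    {"engine": "Perplexity", "intent": "buy", "mentioned": True},
]

def mention_rate(records, key):
    """Return mentions / total prompts for each value of `key`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in records:
        totals[record[key]] += 1
        hits[record[key]] += int(record["mentioned"])
    return {group: hits[group] / totals[group] for group in totals}

rates_by_engine = mention_rate(scan, "engine")
rates_by_intent = mention_rate(scan, "intent")
```

Grouping by `"intent"` instead of `"engine"` gives the same ratio per intent group, so one function can serve several dashboard filters.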

2) Citation rate (or linked mention rate)

What it measures: How often the assistant cites your site (or controlled properties) as a source.

How to calculate: Answers that include a citation/link to your domain / total prompts.

Why it matters: Citations are a strong signal of trust and can drive downstream traffic even when immediate clicks are low.
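
One possible way to compute this, assuming each stored answer keeps the list of URLs the assistant cited, is sketched below. The domain `example.com` and the `citations` field are placeholders for illustration.

```python
from urllib.parse import urlparse

# Hypothetical set of domains you control.
OWN_DOMAINS = {"example.com"}

def cites_us(cited_urls, own_domains=OWN_DOMAINS):
    """True if any cited URL is on one of our domains or their subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if any(host == d or host.endswith("." + d) for d in own_domains):
            return True
    return False

def citation_rate(answers):
    """Answers citing our domain / total answers."""
    if not answers:
        return 0.0
    return sum(cites_us(a["citations"]) for a in answers) / len(answers)

answers = [
    {"citations": ["https://www.example.com/pricing", "https://other.org/x"]},
    {"citations": ["https://notexample.com/"]},  # look-alike domain, not counted
    {"citations": []},
    {"citations": ["https://blog.example.com/post"]},
]
rate = citation_rate(answers)
```

Matching on the parsed hostname rather than on a substring avoids counting look-alike domains as your own.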

3) AI Share of Voice (AI SOV)

What it measures: How your brand’s presence compares to competitors in the same answers.

Common approach: your brand’s mentions divided by the total mentions of all brands in a defined competitor set (your own included), counted across the same tracked answers.

Why it matters: AI SOV turns anecdotal “we show up sometimes” into competitive math you can trend over time.
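
A minimal sketch of one share-of-voice calculation follows. It assumes each answer has already been reduced to the set of brands it mentions; the brand names are invented, and other SOV definitions (for example, weighting by position) are equally possible.

```python
from collections import Counter

def share_of_voice(answers, brands):
    """Each brand's mentions / total mentions across the competitor set."""
    counts = Counter()
    for mentioned in answers:
        for brand in brands:
            if brand in mentioned:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Hypothetical data: the set of tracked brands mentioned in each answer.
answers = [{"Acme", "Bolt"}, {"Bolt"}, {"Acme", "Bolt", "Core"}, set()]
sov = share_of_voice(answers, ["Acme", "Bolt", "Core"])
```

Because the denominator is total mentions in the set, the shares sum to 1 whenever at least one tracked brand is mentioned.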

4) Shortlist presence (Top N inclusion)

What it measures: Whether you appear in the assistant’s “top picks” or recommended set (for example, “top 3” tools).

How to calculate: Prompts where you appear in the top N / total prompts that return a shortlist.

Why it matters: Many commercial prompts resolve into a shortlist. Being mentioned is good; being shortlisted is better.
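
The top N calculation can be sketched as follows, assuming each answer has been parsed into an ordered list of recommended brands (empty when the assistant returned no shortlist). The parsing step itself is out of scope here, and the brand names are hypothetical.

```python
def shortlist_presence(shortlists, brand, n=3):
    """Prompts where `brand` is in the top n / prompts that returned a shortlist."""
    with_list = [s for s in shortlists if s]  # ignore prompts with no shortlist
    if not with_list:
        return 0.0
    hits = sum(brand in s[:n] for s in with_list)
    return hits / len(with_list)

# Hypothetical ordered recommendations, one list per prompt.
shortlists = [
    ["Bolt", "Acme", "Core"],
    ["Core", "Bolt", "Dyna", "Acme"],  # Acme is listed, but outside the top 3
    [],                                # no shortlist returned
    ["Acme"],
]
presence = shortlist_presence(shortlists, "Acme", n=3)
```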

5) Prompt coverage

What it measures: Coverage across the prompt library, segmented by funnel stage.

How to calculate: % of prompts in each intent group where you appear.

Why it matters: Many brands over-index on “best X” prompts while missing “X vs Y,” “alternatives,” “pricing,” “near me,” or “for beginners” prompts that drive decisions.

For broader context on how this fits into GEO strategy, see “GEO vs SEO: which strategy should SMEs prioritize in 2025?”

Quality KPIs (the “are we represented correctly?” metrics)

Presence alone can be risky. AI can mention you with wrong features, outdated pricing, incorrect locations, or the wrong category. Quality KPIs help you defend the brand.

6) Accuracy score

What it measures: Whether AI statements about your brand match reality.

How to operationalize: Create a checklist of critical facts (positioning, core offering, geography served, support hours, key differentiators, compliance claims). Score each answer as accurate/partially accurate/inaccurate.

Why it matters: Inaccurate answers can cause lost deals, support load, and reputational damage.
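
To turn the three labels into a single trendable number, one option is a weighted average. The weights below (1, 0.5, 0) are an assumption for illustration; the labels themselves would come from a human or assisted QA pass against your fact checklist.

```python
# Assumed weights for the three QA labels; adjust to your own policy.
WEIGHTS = {"accurate": 1.0, "partial": 0.5, "inaccurate": 0.0}

def accuracy_score(labels):
    """Average weight across reviewed answers, between 0 and 1."""
    if not labels:
        return 0.0
    return sum(WEIGHTS[label] for label in labels) / len(labels)

# Hypothetical QA results for four reviewed answers.
labels = ["accurate", "partial", "inaccurate", "accurate"]
score = accuracy_score(labels)
```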

7) Attribute coverage

What it measures: Whether the assistant includes the buying attributes you care about.

Examples of attributes:

- For e-commerce: shipping speed, returns, warranty, availability, price range

- For SaaS: integrations, onboarding time, security posture, reporting, support

- For local brands: service area, hours, booking method, reviews

Why it matters: If AI consistently omits your strongest differentiator, you can be present and still lose.
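
A simple way to approximate attribute coverage is phrase matching over the answer text. The sketch below assumes a hand-maintained phrase list per attribute; the attribute names, phrases, and sample answers are all illustrative, and plain substring matching will miss paraphrases.

```python
import re

# Hypothetical target attributes, each with phrases that signal it.
TARGET_ATTRIBUTES = {
    "integrations": ["integration", "integrates with"],
    "security": ["soc 2", "encryption"],
    "support": ["support"],
}

def attribute_coverage(answers, attributes):
    """Share of answers that contain at least one phrase for each attribute."""
    coverage = {}
    for name, phrases in attributes.items():
        pattern = re.compile("|".join(re.escape(p) for p in phrases), re.IGNORECASE)
        hits = sum(bool(pattern.search(answer)) for answer in answers)
        coverage[name] = hits / len(answers) if answers else 0.0
    return coverage

answers = [
    "Acme integrates with Slack and offers 24/7 support.",
    "Acme is a budget option with email support.",
]
coverage = attribute_coverage(answers, TARGET_ATTRIBUTES)
```

An attribute with coverage near zero across many answers is a candidate for the "present but still losing" problem described above.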

8) Sentiment and framing

What it measures: The tone and “role” AI assigns your brand (recommended, neutral, warning, niche option).

Why it matters: AI can implicitly position you as premium, budget, enterprise-only, or beginner-friendly, and that framing shapes conversion.

9) Category association (entity positioning)

What it measures: Whether AI places you in the correct category and compares you to the right alternatives.

Why it matters: If AI misclassifies you, you will show up in the wrong prompts and miss the right ones.

Stability KPIs (the “do we keep it?” metrics)

AI outputs can change when models update, when new sources are introduced, or when competitors publish better-structured content.

10) Mention volatility

What it measures: How much your mention rate fluctuates week to week.

How to use it: High volatility suggests you are not “anchored” as a stable entity in the sources the model relies on.
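
There is no single agreed formula for volatility. As a sketch, the snippet below reports two common candidates over a series of weekly mention rates: the standard deviation and the mean absolute week-over-week change. The weekly values are made up.

```python
from statistics import mean, pstdev

def volatility(weekly_rates):
    """Summarize how much a weekly mention-rate series moves around."""
    deltas = [abs(b - a) for a, b in zip(weekly_rates, weekly_rates[1:])]
    return {
        "std_dev": pstdev(weekly_rates),
        "mean_abs_change": mean(deltas) if deltas else 0.0,
    }

# Hypothetical mention rates from four consecutive weekly scans.
weekly_rates = [0.40, 0.45, 0.30, 0.50]
result = volatility(weekly_rates)
```

Whichever measure you pick, keep it fixed over time so that changes reflect the channel rather than the formula.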

11) Time to first mention (TTFM)

What it measures: How long it takes for new content, fixes, or announcements to start showing up as mentions.

Why it matters: TTFM tells you whether your distribution and indexing pipelines are working for AI ecosystems.

12) Time to fix (TTFix)

What it measures: How long it takes to correct a recurring AI error after you publish clarifications.

Why it matters: This is a governance KPI. It forces a closed-loop process instead of reactive fire drills.
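
Both TTFM and TTFix reduce to counting days between two events. The sketch below assumes you log a publish (or fix) date and the date of the first scan that shows the new or corrected mention; all dates are invented.

```python
from datetime import date

def days_between(start, end):
    """Days from `start` to `end`, or None if the second event has not happened yet."""
    if end is None:
        return None
    return (end - start).days

# Hypothetical log entries.
ttfm = days_between(date(2025, 3, 3), date(2025, 3, 24))   # publish -> first mention
ttfix = days_between(date(2025, 4, 1), date(2025, 4, 15))  # fix -> first corrected answer
pending = days_between(date(2025, 5, 1), None)             # fix not yet reflected
```

Note that the resolution of both metrics is limited by scan frequency: with weekly scans, a 3-day and a 6-day turnaround look identical.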

13) Hallucination incidence rate

What it measures: How often the assistant invents incorrect details about your business (features you do not offer, locations you do not serve, unsupported claims).

Why it matters: This is the risk metric executives understand quickly.

Outcome KPIs (the “is it worth it?” metrics)

AI visibility is often indirect, but not unmeasurable. The key is to connect mentions to downstream behaviors.

14) Branded search lift

What it measures: Whether branded queries increase in parallel with improved AI visibility.

How to track: Trend branded impressions and clicks in Search Console, and branded sessions in analytics, then overlay with your AI mention rate.
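
One way to make the overlay concrete is to compute the lift against a baseline week and the correlation between the two weekly series. The figures below are invented, and a high correlation on a handful of weeks is only a hint, not proof that AI visibility caused the lift.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation between two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0

# Hypothetical weekly series exported from your scans and from Search Console.
mention_rates = [0.2, 0.3, 0.4, 0.5]
branded_impressions = [1000, 1100, 1300, 1400]

# Relative growth of branded impressions from the first to the latest week.
lift = (branded_impressions[-1] - branded_impressions[0]) / branded_impressions[0]
r = pearson(mention_rates, branded_impressions)
```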

15) Direct traffic and dark social lift

What it measures: Growth in direct visits and hard-to-attribute referrals that often follow AI-assisted discovery.

Why it matters: Users frequently copy a brand name from an AI answer and navigate directly.

16) Assisted conversions (AI-influenced)

What it measures: Conversions where AI likely influenced consideration, even if the last click did not come from an AI engine.

Practical proxies:

- Increases in conversion rate on branded landing pages

- Higher lead quality (sales notes, close rates) after visibility gains

- “How did you hear about us?” fields that include “ChatGPT” or “AI search”

The KPI table teams actually use

The fastest way to operationalize this is to standardize a KPI definition sheet that everyone agrees on.

| KPI | What it answers | How you measure it (practical) | What to do if it drops |
|---|---|---|---|
| Mention rate | Are we included? | Mentions / prompts, by engine and intent | Expand prompt coverage, improve entity clarity, strengthen key pages and FAQs |
| Citation rate | Do we get sourced? | Cited answers / prompts | Improve AI-readable structure, add/validate schema, publish supporting pages |
| AI Share of Voice | Are we winning vs competitors? | Your mentions vs total mentions in competitor set | Identify who displaced you, analyze their cited sources, close content gaps |
| Shortlist presence | Are we in the top picks? | Top N inclusions / shortlist prompts | Improve comparison content, clarify differentiators, add proof points |
| Accuracy score | Are we described correctly? | QA scoring against a fact checklist | Fix site metadata and FAQs, publish clarification pages, align entities |
| Attribute coverage | Are our strengths included? | % answers containing target attributes | Add structured FAQs, add spec tables, improve product/service pages |
| Volatility | Is visibility stable? | Week-over-week variance | Stabilize entity signals, reduce conflicting info, monitor updates |
| TTFM | How fast do we appear after updates? | Days from publish to mention | Improve distribution, internal linking, indexing, content syndication |
| TTFix | How fast do errors go away? | Days from fix to corrected mentions | Create a remediation playbook, add monitoring and alerts |
| Assisted conversions | Is AI visibility driving business? | Branded lift, direct lift, CRM notes | Tighten attribution, align sales enablement, double down on winning intents |

How to build an AI visibility KPI baseline in 30 days

You do not need a perfect system to start, but you do need consistency.

Step 1: Build a prompt library that reflects revenue intent

Create prompts across these groups:

- Category discovery: “best [category] for [use case]”

- Comparisons: “[your brand] vs [competitor]” and “alternatives to [competitor]”

- Local intent (if relevant): “near me,” “in [city],” “open now”

- Trust checks: “is [brand] legit,” “reviews,” “pricing,” “refund policy”

Keep the library small enough to run weekly, large enough to be representative. Many teams start with 30 to 80 prompts and expand over time.
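
A prompt library like this can be generated from templates so the wording stays identical from scan to scan. The sketch below is one possible approach; the template names, variables, and brand names are placeholders.

```python
from itertools import product

# Hypothetical templates keyed by intent group.
TEMPLATES = {
    "discovery": "best {category} for {use_case}",
    "comparison": "{brand} vs {competitor}",
    "alternatives": "alternatives to {competitor}",
}

# Hypothetical values to substitute into the templates.
VARIABLES = {
    "category": ["project management tool"],
    "use_case": ["agencies", "freelancers"],
    "brand": ["Acme"],
    "competitor": ["Bolt", "Core"],
}

def build_prompts(templates, variables):
    """Expand every template over all variable combinations, without duplicates."""
    keys = list(variables)
    prompts = []
    for intent, template in templates.items():
        for combo in product(*(variables[k] for k in keys)):
            prompts.append((intent, template.format(**dict(zip(keys, combo)))))
    return list(dict.fromkeys(prompts))  # drop duplicates, keep first-seen order

prompts = build_prompts(TEMPLATES, VARIABLES)
```

Storing the intent label next to each prompt makes it straightforward to segment KPIs by funnel stage later.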

Step 2: Segment KPIs by engine, market, and intent

AI outputs vary widely by platform and context. Your KPI dashboard should always be filterable by:

- Engine (ChatGPT, Gemini, Claude, Perplexity)

- Country or city (especially for multi-location brands)

- Funnel stage (research vs purchase)

Step 3: Define what counts as a “mention”

Decide upfront:

- Is a mention only the exact brand name, or also product names?

- Do misspellings count?

- Do subsidiary brands count?

- Do marketplace listings count as “you,” or only your domain?

This removes ambiguity and makes trends meaningful.
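
Once those decisions are made, they can be written down as an explicit rule set so every scan counts mentions the same way. The sketch below is illustrative only: the rule groups and the brand "Acme" are hypothetical, and real matching may need fuzzier handling than whole-word regexes.

```python
import re

# Hypothetical mention rules: each group reflects one of the decisions above.
MENTION_RULES = {
    "brand_names": ["Acme"],
    "product_names": ["Acme Boards"],
    "misspellings": ["Acmee"],   # included because we decided misspellings count
    "subsidiaries": [],          # left empty because we decided they do not count
}

def build_matcher(rules):
    """Compile one case-insensitive, whole-word pattern from all rule groups."""
    terms = [term for group in rules.values() for term in group]
    terms.sort(key=len, reverse=True)  # try longer names first
    pattern = r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")\b"
    return re.compile(pattern, re.IGNORECASE)

def is_mention(answer, matcher):
    """True if the answer text contains any term that counts as a mention."""
    return bool(matcher.search(answer))

matcher = build_matcher(MENTION_RULES)
```

Keeping the rules in one place also gives you a record of when the definition changed, which matters when a trend line suddenly shifts.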

Step 4: Add QA, not just counts

At minimum, QA the commercial prompts (the ones closest to conversion). A weekly quality check on 10 to 20 answers can prevent expensive misinformation.

Step 5: Close the loop with fixes

KPIs only matter if they trigger action. When you find gaps, typical fixes include:

- Publishing AI-ready FAQs that match real prompts

- Improving metadata clarity (titles, descriptions, on-page definitions)

- Adding structured data (see Schema.org and Google’s structured data documentation)

- Creating comparison and “alternatives” pages that reduce competitor capture

- Standardizing entity details (name, address, phone, URLs) across the web

If you want a stronger operational model for reporting, CapstonAI also breaks down how GEO dashboards differ from classic reports in “Which 6 GEO dashboards vs SEO reports should you use in 2025?”

Common measurement pitfalls (and how to avoid them)

Pitfall: treating one-off tests as truth. AI answers can vary by time, location, user context, and model updates. Run repeated scans and read the trend lines, not single results.

Pitfall: only tracking “best X” prompts. Many buying journeys happen in comparisons, implementation questions, and local queries.

Pitfall: counting mentions without checking accuracy. A wrong mention can be worse than no mention.

Pitfall: no competitor set. “We’re mentioned” is not the goal. “We’re mentioned more than the brands that steal our deals” is the goal.

Pitfall: no remediation SLA. If you cannot answer “how fast can we fix AI misinformation,” you do not yet control the channel.

Frequently Asked Questions

What is AI search visibility? AI search visibility is how often, where, and how accurately AI engines (like ChatGPT, Gemini, Claude, and Perplexity) mention and recommend your brand in generated answers.

What KPI should I start with for AI search visibility? Start with mention rate and citation rate, then add AI Share of Voice against a defined competitor set. Add accuracy scoring as soon as you see recurring brand misinformation.

How do you measure AI mentions at scale? Most teams standardize a prompt library, run repeat scans by engine and market, store results in a mentions ledger, and trend KPIs weekly. Dedicated AI visibility tools can automate collection, mapping, and alerts.

Why are clicks less reliable in AI search? AI answers often satisfy the query without a click, and users may convert later via branded search or direct navigation. Mentions and citations capture influence earlier in the journey.

How do I link AI visibility to revenue? Use outcome proxies like branded search lift, direct traffic lift, assisted conversion patterns, and CRM self-reported attribution (“found you on ChatGPT”). Trend these alongside mention rate and AI Share of Voice.

Turn AI visibility into a KPI you can manage

If your team is still reporting success as “traffic from Google,” you are likely under-measuring what AI engines are already doing to your funnel.

CapstonAI helps brands and agencies track how ChatGPT, Gemini, Claude, and Perplexity mention your business, diagnose blind spots, publish AI-ready FAQs and metadata, monitor competitors, and set up alerts when visibility shifts.

Start with a free AI visibility audit to benchmark your mention rate, citation rate, and AI Share of Voice, then prioritize the fixes that move those KPIs in the right direction.