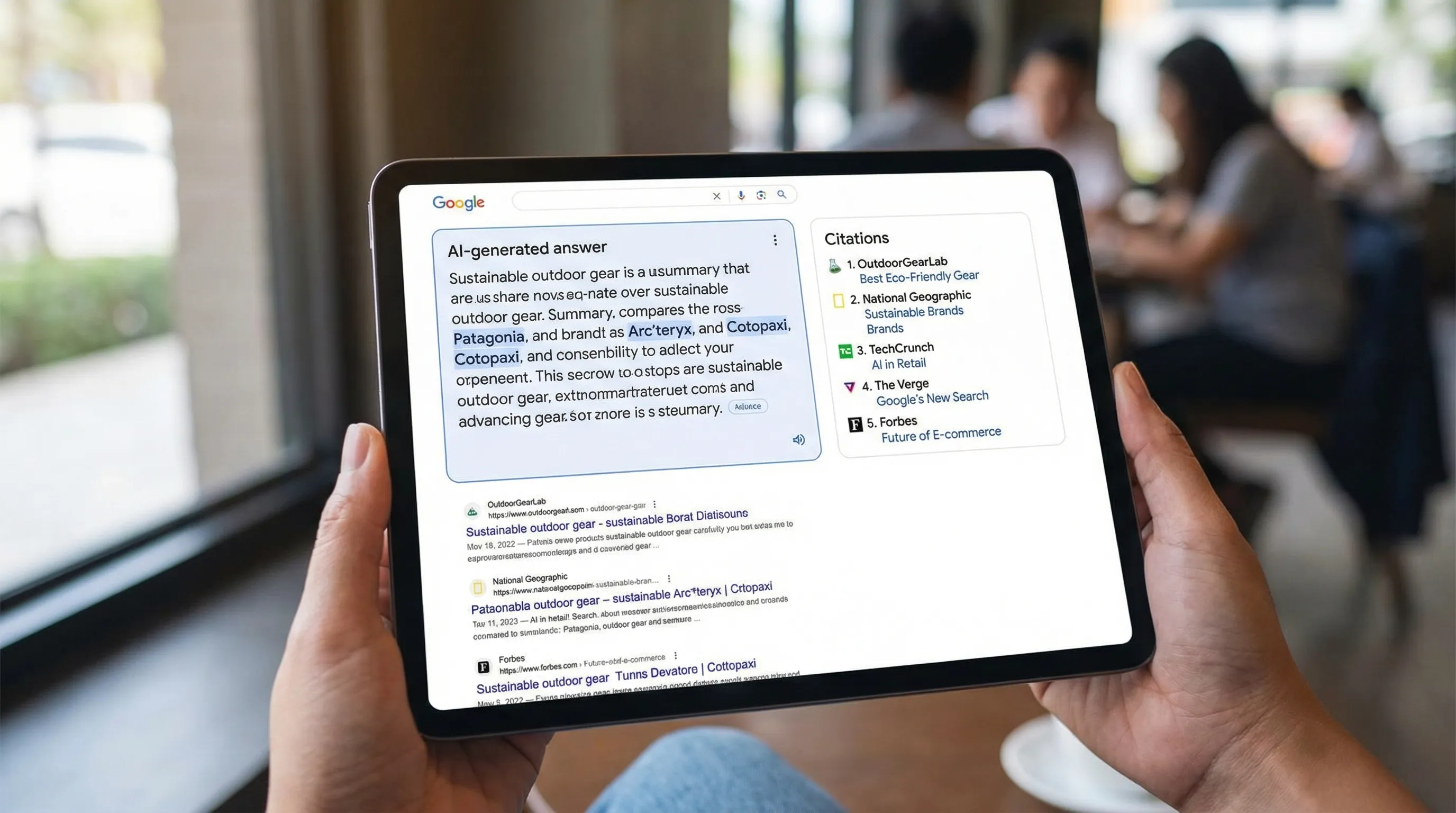

Brand visibility in Google is no longer just “Where do we rank?” Increasingly, the question is “Does Google’s AI mention us, and does it cite us?”

In Google AI Search (especially AI Overviews and other generative answer surfaces), being referenced can influence consideration even when users never click through. That makes brand mentions and citations a new kind of demand signal and a new kind of risk. If your competitor is consistently cited in AI answers for your highest-intent queries, you can lose mindshare even if your classic rankings look fine.

This guide breaks down what to track, how to track it reliably, and how to turn AI citations into a measurable visibility program.

What counts as a “brand mention” vs a “citation” in Google AI Search

In practice, teams mix these terms. For tracking and reporting, it helps to define them clearly:

- Brand mention: Your brand (or product, location, executive, proprietary term) appears in the AI-generated answer text.

- Citation: Google shows a source link tied to the AI answer (often in a citation carousel or list), typically pointing to a specific URL. A citation may be from your site, a partner, a review site, a marketplace listing, a news article, or a knowledge source.

A mention without a citation still matters for awareness, but citations are usually the defensible “credit” that correlates with traffic potential, authority, and repeat inclusion.

Here’s a useful taxonomy you can adopt internally.

| Visibility event | What it looks like | Why it matters | What to capture in tracking |
|---|---|---|---|
| Cited mention (best case) | Your brand is named and your site is linked as a source | You get credit, potential clicks, and reinforcement of authority | Query, date, location/device, mention text, cited URL(s), cited domain, citation order |
| Uncited mention | Your brand appears but the citations point elsewhere | Brand awareness without traffic credit, can be fragile | Mention text, which sources were cited instead, what claim you were associated with |
| Cited but not mentioned | Your page is cited but your brand name is absent from the answer text | Can still drive clicks, also signals “source authority” | Cited URL, how it was used (definition, comparison, stats, how-to) |
| Competitor cited | Competitor is cited/mentioned for a query you care about | Lost AI shelf space in the evaluation stage | Competitor entity, cited URLs, source types (reviews, directories, media) |
| Wrong-entity mention | Google confuses you with a similar name or outdated brand | Reputation and conversion risk | The incorrect mention, the query context, the sources Google used |
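If you log snapshots programmatically, it helps to assign each one a single primary label from this taxonomy. The sketch below is one hypothetical way to do that: the `classify` function, its priority order (wrong-entity first, because it carries the most risk), and the extra `NOT_PRESENT` bucket are assumptions, not a standard. Competitor details should still be stored as separate fields, since one label cannot capture every overlap.

```python
from enum import Enum


class VisibilityEvent(Enum):
    CITED_MENTION = "cited mention"
    UNCITED_MENTION = "uncited mention"
    CITED_NOT_MENTIONED = "cited but not mentioned"
    COMPETITOR_CITED = "competitor cited"
    WRONG_ENTITY = "wrong-entity mention"
    NOT_PRESENT = "not present"  # hypothetical extra bucket, not in the taxonomy table


def classify(brand_mentioned: bool, brand_cited: bool,
             competitor_present: bool, wrong_entity: bool) -> VisibilityEvent:
    """Assign one primary label per snapshot, checking the riskiest case first."""
    if wrong_entity:
        return VisibilityEvent.WRONG_ENTITY
    if brand_mentioned and brand_cited:
        return VisibilityEvent.CITED_MENTION
    if brand_mentioned:
        return VisibilityEvent.UNCITED_MENTION
    if brand_cited:
        return VisibilityEvent.CITED_NOT_MENTIONED
    if competitor_present:
        return VisibilityEvent.COMPETITOR_CITED
    return VisibilityEvent.NOT_PRESENT
```

For example, `classify(True, False, True, False)` returns `VisibilityEvent.UNCITED_MENTION`: you were named, but the citations went elsewhere.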

Why tracking AI mentions is harder than tracking rankings

Classic SEO tracking assumes relatively stable “10 blue links” behavior. Google AI Search does not.

Key reasons AI mention tracking needs its own system:

- High volatility: AI answers can change frequently as Google refreshes sources, rewrites the query internally, or adjusts the answer.

- Context sensitivity: Location, device type, language, and query phrasing can materially change both the answer and the citation set.

- Different inclusion logic: A page can rank well organically but never get cited, and a page can be cited without ranking in the top 10.

- Multi-source answers: You are competing for a small number of citations, not a page of results.

- Attribution gaps: In tools like GA4 and Search Console, it can be difficult (and sometimes impossible) to cleanly separate AI Overview-driven clicks from standard organic clicks.

So the goal is not “perfect measurement.” The goal is repeatable sampling, consistent baselines, and fast detection of changes.

A practical workflow to track brand mentions and citations in Google AI Search

Think of this as a lightweight observability pipeline: define what you will monitor, capture snapshots consistently, extract the mentions/citations, then score and alert.

1) Define the entities you want Google to understand

Start by listing the “things” that should reliably resolve to your brand in AI answers.

Typical entity groups:

- Brand name and common misspellings

- Product and category names

- Key location entities (cities, stores, service areas)

- Executive/spokesperson names (only if relevant to your SEO strategy)

- Proprietary methods, frameworks, or named features customers search for

This matters because many AI visibility problems turn out to be entity resolution problems: Google is not sure which “X” you are.

If you operate multiple locations, treat each location as a trackable entity group and keep it tied to the correct landing page and citations.
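A simple way to put the entity list to work is a variant matcher that scans AI answer text for each entity group. This is a minimal sketch assuming plain-text answers; the group names and the “Acme” variants are hypothetical placeholders, and regex matching will not catch paraphrases or pronouns.

```python
import re

# Hypothetical entity groups; substitute your own brand, products, and locations.
ENTITY_GROUPS = {
    "brand": ["Acme Analytics", "AcmeAnalytics", "Acme Analytix"],  # name + misspellings
    "product": ["Acme Pulse"],
    "location": ["Acme Analytics Denver"],
}


def compile_patterns(groups: dict[str, list[str]]) -> dict[str, re.Pattern]:
    """Build one case-insensitive, word-bounded regex per entity group."""
    patterns = {}
    for group, variants in groups.items():
        # Longest variants first so the most specific spelling wins within a group.
        ordered = sorted(variants, key=len, reverse=True)
        alternation = "|".join(re.escape(v) for v in ordered)
        patterns[group] = re.compile(rf"\b(?:{alternation})\b", re.IGNORECASE)
    return patterns


def find_mentions(answer_text: str, patterns: dict[str, re.Pattern]) -> dict[str, list[str]]:
    """Return the distinct matched strings per group, omitting groups with no match."""
    found = {}
    for group, pattern in patterns.items():
        hits = sorted({m.group(0) for m in pattern.finditer(answer_text)})
        if hits:
            found[group] = hits
    return found
```

Returning the exact matched strings (not just yes/no) also surfaces which misspellings Google actually uses.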

2) Build a query set that matches real evaluation behavior

AI answers tend to show up most often on exploratory and comparative queries, but that varies by industry. Build a query set that reflects how prospects actually evaluate.

A balanced query library usually includes:

- Category definitions: “what is [category]”, “how does [category] work”

- Commercial comparison: “best [category] for [use case]”, “[brand] vs [competitor]”

- Alternatives and replacements: “alternatives to [competitor]”, “replace [legacy solution]”

- Proof and trust: “[brand] reviews”, “is [brand] legit”, “is [brand] safe”

- Local intent (if applicable): “best [service] near me”, “[brand] [city]”, “where to buy [product] in [city]”

Operational tip: keep this query set stable for baseline tracking, and maintain a separate “experimental” set for new ideas.
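Templating keeps the baseline set reproducible: the same inputs always expand to the same queries. The sketch below uses hypothetical placeholder values and covers only a subset of the query classes above; each query carries its intent class so results can be segmented later.

```python
from itertools import product
from string import Formatter

# Hypothetical templates, a subset of the query classes listed above.
TEMPLATES = {
    "definition": ["what is {category}", "how does {category} work"],
    "comparison": ["best {category} for {use_case}", "{brand} vs {competitor}"],
    "alternatives": ["alternatives to {competitor}"],
    "trust": ["{brand} reviews", "is {brand} legit"],
    "local": ["{brand} {city}"],
}


def placeholders(pattern: str) -> list[str]:
    """Names of the {fields} used in one template."""
    return [name for _, name, _, _ in Formatter().parse(pattern) if name]


def build_queries(templates: dict[str, list[str]],
                  values: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Expand each template with every combination of the values it uses."""
    rows = set()
    for intent, patterns in templates.items():
        for pattern in patterns:
            names = placeholders(pattern)
            for combo in product(*(values[n] for n in names)):
                rows.add((intent, pattern.format(**dict(zip(names, combo)))))
    return sorted(rows)
```

With one brand, two competitors, one category, one use case, and one city, these templates expand to ten queries. Keeping `values` under version control makes it easier to tell a real visibility change from a query-set change.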

3) Standardize how you capture AI answer snapshots

You need consistent conditions. Otherwise, you will argue about data instead of acting on it.

At minimum, log:

- Date/time

- Country/region and (if relevant) city

- Device type (desktop/mobile)

- Logged-in vs logged-out state

- Browser/language

Many teams start with manual spot checks and screenshots, then graduate to automation. If you automate, make sure your approach respects applicable platform terms and internal security policies.
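One way to enforce consistent conditions is to make them an explicit, immutable record attached to every snapshot, and to compare results only when the condition key matches. The class and field names below are hypothetical, and the device check assumes only the desktop/mobile split listed above.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class CaptureConditions:  # hypothetical record of the conditions listed above
    country: str
    city: Optional[str]
    device: str          # "desktop" or "mobile"
    logged_in: bool
    browser: str
    language: str

    def __post_init__(self) -> None:
        if self.device not in {"desktop", "mobile"}:
            raise ValueError(f"unexpected device type: {self.device!r}")

    def key(self) -> str:
        """Stable string so only snapshots taken under identical conditions are compared."""
        return "|".join(str(v) for v in asdict(self).values())


@dataclass
class Snapshot:
    query: str
    conditions: CaptureConditions
    answer_text: str
    # default_factory stamps each snapshot at creation time (in UTC).
    captured_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
```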

4) Extract the fields that make analysis possible (not just screenshots)

Whether you use a spreadsheet, a database, or a dedicated platform, capture structured fields so you can compute trends.

Recommended fields:

- Query

- Intent class (definition, comparison, local, troubleshooting, purchase)

- Your mention present (yes/no)

- Your citation present (yes/no)

- Cited URL(s) from your domain

- Competitor mentions and competitor citations

- Citation source types (brand site, marketplace, media, review site, directory, UGC/forum)

- Sentiment or framing (neutral, positive, negative) based on the surrounding phrasing

This turns “we saw something once” into a measurable dataset.
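As a sketch, the recommended fields map onto a flat record that exports cleanly to a spreadsheet. `AnswerRecord` and `to_csv` are hypothetical names, and joining multi-value fields with semicolons is an assumption chosen so each query stays on one row.

```python
import csv
import io
from dataclasses import dataclass, field, fields


@dataclass
class AnswerRecord:  # hypothetical schema mirroring the recommended fields
    query: str
    intent: str                       # definition, comparison, local, troubleshooting, purchase
    brand_mentioned: bool
    brand_cited: bool
    our_cited_urls: list[str] = field(default_factory=list)
    competitor_mentions: list[str] = field(default_factory=list)
    competitor_citations: list[str] = field(default_factory=list)
    source_types: list[str] = field(default_factory=list)
    framing: str = "neutral"          # neutral, positive, negative


def to_csv(records: list[AnswerRecord]) -> str:
    """Serialize records to CSV, joining list fields with ';'."""
    buf = io.StringIO()
    names = [f.name for f in fields(AnswerRecord)]
    writer = csv.DictWriter(buf, fieldnames=names)
    writer.writeheader()
    for record in records:
        row = {}
        for name in names:
            value = getattr(record, name)
            row[name] = ";".join(value) if isinstance(value, list) else value
        writer.writerow(row)
    return buf.getvalue()
```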

5) Track a small set of KPIs that you can defend

You do not need 25 metrics. You need a few metrics that survive leadership scrutiny and map to action.

| KPI | Definition | Why it’s useful |
|---|---|---|
| AI mention rate | % of tracked queries where your brand is mentioned in the AI answer | Measures top-of-funnel presence and brand recall potential |
| AI citation rate | % of tracked queries where your domain is cited | Measures “credit” and defensible visibility |
| Citation share of voice | Your citations / total citations across tracked queries | Normalizes visibility in multi-citation answers |
| Competitor citation displacement | Number of tracked queries where a competitor is cited and you are not | Identifies where you are losing “AI shelf space” |
| Uncited mention rate | % of tracked queries where your brand is mentioned but your domain is not cited | Often signals entity recognition without source preference |
| Citation quality | Share of citations landing on the correct page type (product, location, category, help article) | Prevents “we got cited, but it’s the wrong page” problems |

If you already run classic SEO dashboards, treat these as an additional layer, similar to how teams added local pack tracking years ago.
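The KPIs in the table can be computed from per-query observations in a few lines. This is a hedged sketch: the `Observation` fields are assumptions about what your capture step records, uncited mention rate is taken as a share of all tracked queries, and citation quality assumes you have already labeled whether each of your citations landed on the intended page type.

```python
from dataclasses import dataclass


@dataclass
class Observation:  # hypothetical per-query summary from one tracking run
    query: str
    brand_mentioned: bool
    our_citations: int          # citations pointing at your domain
    total_citations: int        # all citations shown with the answer
    competitor_cited: bool
    correct_page_type: int = 0  # of our citations, how many hit the intended page type


def kpis(obs: list[Observation]) -> dict[str, float]:
    n = len(obs)
    if n == 0:
        raise ValueError("no observations")
    ours = sum(o.our_citations for o in obs)
    total = sum(o.total_citations for o in obs)
    return {
        "ai_mention_rate": sum(o.brand_mentioned for o in obs) / n,
        "ai_citation_rate": sum(o.our_citations > 0 for o in obs) / n,
        "citation_share_of_voice": ours / total if total else 0.0,
        # A count, not a rate: queries where a competitor is cited and you are not.
        "competitor_displacement": sum(o.competitor_cited and o.our_citations == 0 for o in obs),
        "uncited_mention_rate": sum(o.brand_mentioned and o.our_citations == 0 for o in obs) / n,
        "citation_quality": sum(o.correct_page_type for o in obs) / ours if ours else 0.0,
    }
```

For a four-query sample where you are cited on two queries (3 of 12 total citations), the sketch reports a 50% citation rate and a 25% citation share of voice.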

6) Set alerts for reputation and revenue-critical scenarios

AI visibility changes can happen quickly. Alerts keep you from discovering problems after a quarter of pipeline has softened.

High-signal alert conditions:

- Drop in citation rate for your top-intent query set

- Spike in competitor citations on “best”, “vs”, and “alternatives” queries

- Negative framing attached to your brand name

- Wrong entity association (confusion with another brand)

- Loss of citations for a priority location or product line
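The alert conditions above reduce to comparisons between a baseline period and the current one. The sketch below assumes hypothetical 10-percentage-point thresholds and a hypothetical `PeriodStats` summary; tune both to the volatility you actually observe, or sampling noise will trigger alerts.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:  # hypothetical summary of one reporting period
    citation_rate: float             # share of top-intent queries citing your domain
    competitor_citation_rate: float  # share of "best"/"vs"/"alternatives" queries citing a competitor
    negative_mentions: int
    wrong_entity_mentions: int
    cited_locations: frozenset[str]  # priority locations/product lines with at least one citation


def check_alerts(baseline: PeriodStats, current: PeriodStats,
                 rate_drop: float = 0.10, competitor_rise: float = 0.10) -> list[str]:
    alerts = []
    if baseline.citation_rate - current.citation_rate >= rate_drop:
        alerts.append("citation rate drop on top-intent queries")
    if current.competitor_citation_rate - baseline.competitor_citation_rate >= competitor_rise:
        alerts.append("competitor citation spike on best/vs/alternatives queries")
    if current.negative_mentions > baseline.negative_mentions:
        alerts.append("new negative framing of brand")
    if current.wrong_entity_mentions > 0:
        alerts.append("wrong-entity association detected")
    # Any priority location or product line that was cited before but not now.
    for lost in sorted(baseline.cited_locations - current.cited_locations):
        alerts.append(f"lost citations for {lost}")
    return alerts
```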

7) Close the loop: translate tracking into fixes Google can actually use

Tracking is only valuable if it changes what you publish and what Google can confidently cite.

Common “fix categories” that correlate with better citation eligibility:

- AI-ready metadata: clearer titles/descriptions and page summaries that match the query intent

- Structured data and entity clarity: align Organization/LocalBusiness/Product/Article schema with what the page is about (see Google’s structured data documentation)

- Sourceworthiness: publish pages that are easy to cite (clear definitions, specs, comparisons, policies, pricing logic where appropriate, and up-to-date details)

- Consistency across the web: ensure your brand facts match across your site, profiles, and key third-party listings

- Trust signals: strengthen authorship, references, and transparency aligned with quality guidance (see Google’s Search Quality Rater Guidelines and related documentation)
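For the structured data item, Organization markup is typically embedded as JSON-LD in a `<script type="application/ld+json">` element. The sketch below generates a minimal Organization object with the schema.org properties `name`, `url`, `logo`, and `sameAs`; the example values are hypothetical, and Google’s structured data documentation should be checked for the properties it currently supports.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> str:
    """Serialize a minimal schema.org Organization object as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # official profiles that should resolve to the same entity
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element that goes in the page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'
```

Generating the markup from one source of brand facts (rather than hand-editing each template) also helps with the consistency point above.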

Diagnosing why Google AI Search is not citing you (a quick root-cause map)

When a competitor is cited and you are not, teams often jump straight to “we need more content.” Sometimes that’s right, but not always.

Use this mapping to narrow the problem before you invest.

Scenario A: You are mentioned, but not cited

This often means Google recognizes the brand entity, but it prefers other sources for substantiation.

Typical causes:

- Your best page is not easily extractable (buried answers, heavy templates, unclear headings)

- Another site is considered the canonical explainer (review site, media, Wikipedia-style summary)

- Your page exists but is not trusted for the specific claim being made (for example, “best for X,” “cheapest,” “most reliable”)

Actions to test:

- Create or improve a page that answers the exact query class (comparison, alternatives, “best for”) with clear structure

- Add supporting evidence, references, and clear definitions

- Ensure the page targets the correct entity (product vs company vs location) and is internally linked from relevant hubs

Scenario B: You are cited, but the wrong page is cited

This is common for multi-location brands and ecommerce catalogs.

Typical causes:

- Weak page-to-intent mapping (Google picks a blog post instead of a product page)

- Duplicate or near-duplicate pages competing

- Missing structured data that clarifies page type

Actions to test:

- Strengthen internal linking to the preferred landing page

- Consolidate duplicates and enforce canonicalization where appropriate

- Validate structured data and on-page labeling (what the page is, who it is for, where it applies)
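To find wrong-page citations at scale, you can map each cited URL to a page type and compare it with the page type you want cited for that query. The path prefixes and example URLs below are hypothetical and assume your site encodes page type in its URL structure; sites without that convention would need a lookup table or CMS metadata instead.

```python
from urllib.parse import urlparse

# Hypothetical URL conventions; adjust the prefixes to your own site structure.
PAGE_TYPE_PREFIXES = [
    ("/products/", "product"),
    ("/locations/", "location"),
    ("/category/", "category"),
    ("/help/", "help article"),
    ("/blog/", "blog"),
]


def page_type(url: str) -> str:
    path = urlparse(url).path
    for prefix, kind in PAGE_TYPE_PREFIXES:
        if path.startswith(prefix):
            return kind
    return "other"


def wrong_page_citations(cited: list[tuple[str, str]],
                         expected_type: dict[str, str]) -> list[tuple[str, str, str]]:
    """cited: (query, url) pairs. Returns citations whose page type differs from the expected one."""
    return [(q, url, page_type(url)) for q, url in cited
            if q in expected_type and page_type(url) != expected_type[q]]
```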

Scenario C: A competitor dominates citations across your money queries

This can be a distribution problem, not just an on-site problem.

Typical causes:

- Competitor is present on third-party sources Google cites frequently (top review sites, marketplaces, authoritative directories)

- Competitor has more consistent entity coverage for variations (locations, SKUs, service areas)

- Your brand is missing “explainers” that match the way users ask questions

Actions to test:

- Identify the source types Google is using (reviews, media, forums, directories) and prioritize presence where it matters

- Build a prompt and query map to see which intents you do not cover

- Publish citation-friendly pages designed for those intents, not just for keywords
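A quick way to see which source types Google leans on is to tally the domains behind competitor citations. The domain-to-type mapping below is a hypothetical list you would maintain yourself; unknown domains fall into an `other` bucket worth reviewing by hand.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mapping you maintain: domain -> source type.
SOURCE_TYPES = {
    "reviewhub.example": "review site",
    "dailynews.example": "media",
    "forum.example": "UGC/forum",
    "bizdirectory.example": "directory",
}


def source_type_mix(cited_urls: list[str], mapping: dict[str, str]) -> list[tuple[str, int]]:
    """Count citations per source type, most frequent first."""
    counts = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").removeprefix("www.")
        counts[mapping.get(host, "other")] += 1
    return counts.most_common()
```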

Scenario D: Google confuses your brand with another entity

This is a high-risk case because it can create reputation damage.

Typical causes:

- Similar brand names

- Rebrands and legacy domains not handled cleanly

- Inconsistent business details across profiles

Actions to test:

- Audit consistency of brand facts across your site and key profiles

- Strengthen entity signals (about page clarity, schema, consistent naming)

- Track the problem queries and monitor whether the wrong-entity issue persists
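The consistency audit can be partly automated by comparing each profile’s brand facts against your website as the reference. This sketch assumes you have already collected the facts into dictionaries; the profile names and values are hypothetical, and the normalization (digits only for phone numbers, case and punctuation ignored elsewhere) is a simplifying assumption.

```python
import re


def normalize(field: str, value: str) -> str:
    """Phone numbers compare by digits only; other fields ignore case, punctuation, spacing."""
    if field == "phone":
        return re.sub(r"\D", "", value)
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def audit_facts(profiles: dict[str, dict[str, str]], reference: str = "website") -> dict[str, dict]:
    """Return {field: {source: value}} wherever a source disagrees with the reference."""
    ref = profiles[reference]
    mismatches = {}
    for field, ref_value in ref.items():
        for source, facts in profiles.items():
            if source == reference:
                continue
            value = facts.get(field)  # None means the fact is missing on that profile
            if value is None or normalize(field, value) != normalize(field, ref_value):
                mismatches.setdefault(field, {})[source] = value
    return mismatches
```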

How to report AI citations to leadership without overpromising

Executives want to know two things: “Are we winning?” and “What do we do next?”

A strong monthly AI visibility report usually includes:

- A single headline metric: citation rate or citation share of voice for priority queries

- Top gains and losses (queries where you entered or dropped from citations)

- Competitor displacement summary (who is taking share, and where)

- A short actions list tied to expected outcomes (publish, fix, integrate, defend)

- A risk log (negative mentions, wrong entity, lost location visibility)

Avoid presenting AI visibility as a direct replacement for classic SEO reporting. Instead, position it as the measurement layer for “answer-driven search”.
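For the gains-and-losses section, a month-over-month diff of cited queries is usually enough. The sketch assumes hypothetical dictionaries mapping each query to whether your domain was cited, and compares only queries tracked in both months so that adding new queries does not look like a gain.

```python
def gains_and_losses(previous: dict[str, bool], current: dict[str, bool]) -> dict[str, list[str]]:
    """previous/current: query -> True if your domain was cited in that period."""
    prev_cited = {q for q, cited in previous.items() if cited}
    curr_cited = {q for q, cited in current.items() if cited}
    tracked = previous.keys() & current.keys()  # only queries present in both periods
    return {
        "gained": sorted((curr_cited - prev_cited) & tracked),
        "lost": sorted((prev_cited - curr_cited) & tracked),
    }
```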

Tooling: DIY tracking vs an AI visibility platform

You can start manually with a spreadsheet for 20 to 50 queries, but most teams hit scaling problems quickly:

- It becomes hard to keep conditions consistent (location, device, personalization)

- Screenshots do not turn into analyzable data

- Volatility creates noise without baselines and alerting

- Multi-location brands need location-specific visibility, not just “the brand”

This is where an AI visibility platform can help operationalize the workflow.

CapstonAI is built for this use case: tracking how AI engines mention and recommend your business, mapping prompts and mentions, monitoring competitors and share of voice, and helping teams publish AI-ready FAQs and metadata with CMS integration. If you want a fast baseline before building an internal process, you can start with a free AI visibility audit at CapstonAI.

The bottom line

In Google AI Search, citations are becoming a new battleground for trust and consideration. The teams that win will be the ones who treat AI answers like a measurable surface:

- Define what counts as a mention and a citation

- Track a stable query set under consistent conditions

- Measure a small number of defensible KPIs

- Alert on meaningful changes

- Ship fixes that improve entity clarity and cite-worthiness

If you want to see how your brand appears today, and where competitors are taking your citation share, you can run a free audit with CapstonAI and turn AI visibility into a repeatable growth channel.