If your prospects ask Claude questions like “best [product category] for [use case]” or “alternatives to [competitor],” you want your brand to appear as a confident, accurate recommendation. The challenge is that Claude AI mentions are not something you can “rank” for in the classic way. They are an outcome of three things: how clearly the web (and your own content) describes your brand as an entity, how easy it is to extract answers from your pages, and how consistently you show up in credible sources that Claude can learn from or be given at retrieval time.

This guide gives you a practical, SEO-friendly playbook to increase the odds that Claude mentions your brand for the prompts that matter.

What “Claude AI mentions” actually mean (and why they are inconsistent)

A Claude mention is any time Claude names your company, product, or location in response to a user query. In practice, mentions fall into a few patterns:

- Direct recommendation lists (for example, “Top 5 tools for…”) where you want to appear in the shortlist.

- Comparisons (for example, “X vs Y vs Z”) where you want accurate positioning and differentiators.

- “Where to buy” and “near me” intent where location accuracy matters.

- Explainer answers (for example, “What is the best way to…”) where being cited as a source or “recommended provider” is the goal.

Why results vary: depending on which Claude interface a user is in, answers can draw on a mix of model knowledge, provided context, and retrieval sources (such as web results or connected knowledge bases). Your visibility is therefore partly a brand authority problem and partly a content packaging problem.

Step 1: Map the prompts that should trigger your brand

Most teams track keywords. For Claude, you need to track prompt intent clusters.

Start with 20 to 50 prompts that reflect how buyers actually ask questions:

- Category prompts: “best [category] for [industry]”

- Problem prompts: “how to solve [pain] in [context]”

- Comparison prompts: “[competitor] alternatives” and “[competitor] vs [your brand]”

- Trust prompts: “is [brand] legit,” “pricing,” “reviews,” “support,” “security”

- Local prompts (if relevant): “best [service] in [city]”

A useful rule: if a prompt can influence revenue in the next quarter, it belongs in your Claude prompt set.
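One way to keep the prompt set reproducible is to generate it from templates rather than typing each prompt by hand. The sketch below is a minimal illustration, not a prescribed tool: the template wording follows the clusters above, while the brand and competitor names (`AcmePay`, `ExampleCorp`) and the function name `build_prompt_set` are hypothetical.

```python
from itertools import product

# Hypothetical templates, grouped by the intent clusters listed above.
TEMPLATES = {
    "category": ["best {category} for {industry}"],
    "comparison": ["{competitor} alternatives", "{competitor} vs {brand}"],
    "trust": ["is {brand} legit", "{brand} pricing", "{brand} reviews"],
}

def build_prompt_set(templates, values):
    """Expand each template with every combination of the placeholders it uses."""
    prompts = []
    for cluster, patterns in templates.items():
        for pattern in patterns:
            # Only vary the placeholders that actually occur in this pattern.
            keys = [k for k in values if "{" + k + "}" in pattern]
            for combo in product(*(values[k] for k in keys)):
                text = pattern.format(**dict(zip(keys, combo)))
                prompts.append((cluster, text))
    return prompts

# Placeholder values are illustrative assumptions, not real brands.
values = {
    "category": ["payroll software"],
    "industry": ["startups", "agencies"],
    "competitor": ["ExampleCorp"],
    "brand": ["AcmePay"],
}
prompt_set = build_prompt_set(TEMPLATES, values)

for cluster, text in prompt_set:
    print(f"{cluster}: {text}")
```

Keeping the cluster label next to each prompt makes it easier to report results per cluster later.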

Build a simple “prompt to page” mapping

For each prompt, define the page (or asset) that should be the best answer source:

- Product or solution page

- Pricing page

- Comparison page

- Industry landing page

- Help center article

- Location page (for multi-location brands)

This prevents a common failure mode where Claude tries to answer, but your site does not have a single, extractable page that clearly addresses the question.
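The mapping can be as simple as a dictionary that you check for gaps. The following is a rough sketch under assumed names: the prompts, URL paths, and the helper `find_unmapped` are all hypothetical examples.

```python
# Hypothetical mapping from a tracked prompt to the page meant to answer it.
PROMPT_TO_PAGE = {
    "best payroll software for startups": "/solutions/startups",
    "ExampleCorp alternatives": "/compare/examplecorp-alternatives",
    "how much does AcmePay cost": "/pricing",
}

def find_unmapped(prompts, mapping):
    """Return prompts that have no designated answer page, in input order."""
    return [p for p in prompts if not mapping.get(p)]

tracked = [
    "best payroll software for startups",
    "ExampleCorp alternatives",
    "is AcmePay legit",
]
gaps = find_unmapped(tracked, PROMPT_TO_PAGE)
print(gaps)
```

Any prompt that lands in `gaps` is a candidate for a new page or a clearer section on an existing one.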

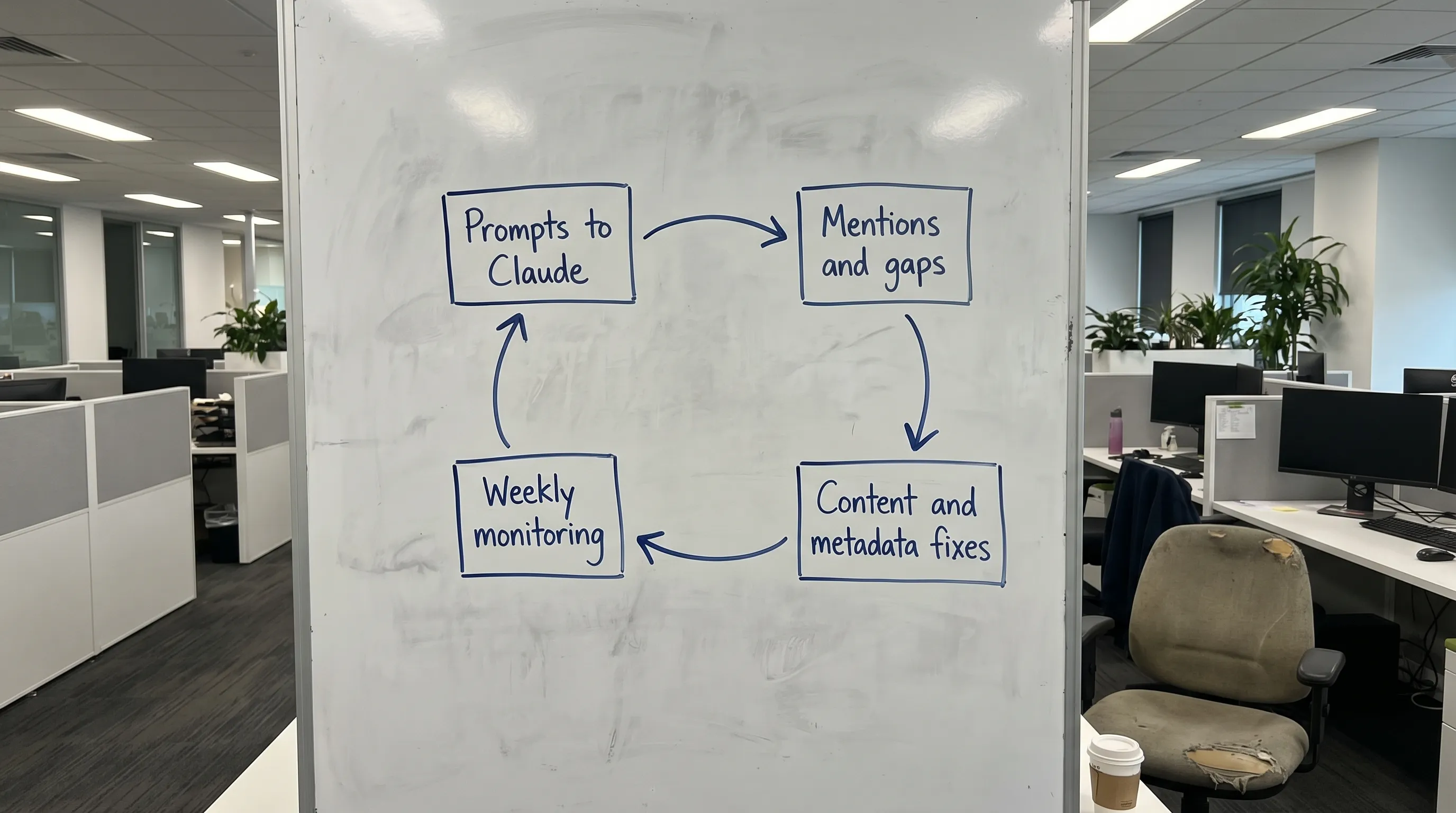

Step 2: Run a baseline audit of Claude’s mentions (and log them like a dataset)

Do a baseline run of your prompt set and capture, for each prompt:

- Whether your brand is mentioned

- Where it appears in a list (position matters)

- Whether the description is accurate

- Whether competitors are mentioned instead

- Whether locations, pricing, or policies are wrong or outdated

Treat this as a repeatable measurement workflow, not a one-time check.
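To log runs like a dataset, each answer can be reduced to one structured row. The sketch below assumes you already have the answer text saved (how you collect it is out of scope here); the `AuditRow` fields, the naive substring matching, and the sample answer are illustrative assumptions. Description accuracy still needs human review and is not automated here.

```python
import csv
import io
import re
from dataclasses import dataclass, asdict, fields
from typing import Optional

@dataclass
class AuditRow:
    prompt: str
    mentioned: bool
    list_position: Optional[int]  # number of the list item naming the brand
    competitors_seen: str         # semicolon-separated names

def list_position(answer: str, brand: str) -> Optional[int]:
    """Return the number of the first numbered-list item that names the brand."""
    for line in answer.splitlines():
        match = re.match(r"\s*(\d+)[.)]\s+(.*)", line)
        if match and brand.lower() in match.group(2).lower():
            return int(match.group(1))
    return None

def audit(prompt, answer, brand, competitors):
    """Build one audit row using simple case-insensitive substring checks."""
    lowered = answer.lower()
    seen = [c for c in competitors if c.lower() in lowered]
    return AuditRow(
        prompt=prompt,
        mentioned=brand.lower() in lowered,
        list_position=list_position(answer, brand),
        competitors_seen=";".join(seen),
    )

def to_csv(rows):
    """Serialize audit rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AuditRow)])
    writer.writeheader()
    writer.writerows(asdict(r) for r in rows)
    return buffer.getvalue()

# Made-up answer text for illustration only.
sample_answer = "1. ExampleCorp - large incumbent\n2. AcmePay - built for startups\n"
row = audit("best payroll software for startups", sample_answer,
            "AcmePay", ["ExampleCorp", "OtherCo"])
print(to_csv([row]))
```

Appending one such row per prompt per run gives you a history you can compare week over week.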

A practical audit table you can reuse

| Prompt cluster | Example prompt | Desired outcome | Common failure | Best fix to test |
|---|---|---|---|---|
| Category discovery | “Best payroll software for startups” | Listed in top options with correct positioning | You are missing, or described vaguely | Create a category-fit page + FAQ blocks + comparison snippets |
| Competitor alternatives | “[Competitor] alternatives” | Included with clear differentiator | Claude only lists large incumbents | Publish an “Alternatives” page with evidence, use cases, and constraints |
| Local intent | “Best dentist in [city]” | Correct location pages mentioned | Wrong city, mixed branches, outdated hours | Strengthen location entity data, consistent NAP, LocalBusiness schema |
| Trust validation | “Is [brand] legit?” | Accurate trust signals summarized | Claude hedges or signals uncertainty | Add policy pages, reviews coverage, third-party mentions, clear About page |
| Pricing intent | “How much does [brand] cost?” | Correct pricing model described | Outdated prices or missing context | Improve pricing page structure, add FAQ entries, timestamp updates |

Step 3: Fix the core reason Claude skips you: weak entity clarity

Many “no mention” problems are not content volume problems. They are entity definition problems.

Claude needs to confidently connect:

- Your brand name and variations

- Your product category

- Your target use cases

- Your geography (if relevant)

- Your official website and profiles

High-impact entity improvements

Focus on these foundational assets:

- A strong About page: clearly state what you do, who you serve, where you operate, and what makes you different.

- Consistent naming across the web: the same brand name, product names, and descriptions on your site and key profiles.

- Structured data for your organization and locations: implement relevant schema types so machines do not have to guess.

If you want a canonical reference for vocabulary, use Schema.org to validate which types match your business (commonly Organization, Product, SoftwareApplication, LocalBusiness, and FAQPage). For implementation guidance and eligibility rules, cross-check Google’s structured data documentation. (Even if the goal is Claude, clean structured data improves machine readability across systems.)
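As a rough illustration of Organization markup, the sketch below builds a JSON-LD object using a handful of Schema.org properties (`name`, `url`, `description`, `sameAs`, `alternateName`). The brand details and the helper name `organization_jsonld` are hypothetical, and which properties you actually need depends on your business.

```python
import json

def organization_jsonld(name, url, description, same_as, alternate_names=()):
    """Build a Schema.org Organization object and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),  # official profiles that confirm the entity
    }
    if alternate_names:
        data["alternateName"] = list(alternate_names)  # brand name variations
    return json.dumps(data, indent=2)

# All values below are placeholders for illustration.
snippet = organization_jsonld(
    name="AcmePay",
    url="https://www.example.com",
    description="Payroll software for startups.",
    same_as=["https://www.linkedin.com/company/example"],
    alternate_names=["Acme Pay"],
)
print(snippet)
```

The output would typically be placed in a `<script type="application/ld+json">` element on the relevant page.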

Step 4: Make your pages “answer extractable” (AEO basics applied to Claude)

Even when Claude has access to good sources, it tends to rely on content that is easy to summarize without introducing errors.

That means your pages should be:

- Specific (clear definitions, constraints, who it is for)

- Structured (tight headings, scannable sections)

- Evidence-backed (examples, benchmarks, policies, citations where appropriate)

- Up to date (pricing, availability, locations, integration lists)

Page patterns that increase mention probability

You do not need dozens of blog posts. You need the right page types:

- “Best for” pages: “Best [your category] for [industry/use case]” with honest fit and non-fit.

- Alternatives and comparisons: transparent comparisons reduce hallucinated positioning.

- FAQ hubs: question-style headings that match prompt language.

- Location pages at scale: one page per branch/service area, with consistent local details.
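For FAQ hubs, the same question-and-answer pairs shown on the page can also be expressed as FAQPage markup. This is a minimal sketch, assuming the questions and answers already exist as visible page content; the sample pair and the helper name `faq_jsonld` are hypothetical.

```python
import json

def faq_jsonld(pairs):
    """Turn (question, answer) pairs into a Schema.org FAQPage JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

# Placeholder content; use the exact wording that appears on the page.
faq_snippet = faq_jsonld([
    ("Who is AcmePay best for?", "Startups with fewer than 50 employees."),
])
print(faq_snippet)
```

Phrasing each `name` the way buyers phrase the prompt keeps the markup aligned with the question-style headings described above.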

If you are building toward a broader Answer Engine strategy, align this work with your existing AEO approach (see CapstonAI’s overview, “Answer Engine Optimization (AEO)”).

Step 5: Repair “AI-ready metadata” (it helps more than most teams think)

Metadata does not just influence clicks in Google. It also improves how pages are interpreted, disambiguated, and classified.

Prioritize:

- Title tags that clearly state category + brand + differentiator

- Meta descriptions that summarize who it is for and the key promise

- Schema that matches the page purpose (Product, FAQPage, LocalBusiness)

- Descriptive image alt text on key pages (especially for products and locations)
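A quick script can flag pages that miss these basics. The sketch below uses Python's standard `html.parser` and checks only three things: whether the title contains the brand and category, whether a meta description exists, and how many images lack alt text. The sample HTML, the brand name, and the check names are illustrative assumptions, not a complete audit.

```python
from html.parser import HTMLParser

class MetaAudit(HTMLParser):
    """Collect the title, the meta description, and a count of images without alt text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def audit_page(html, brand, category):
    """Return a small report of metadata checks for one page."""
    parser = MetaAudit()
    parser.feed(html)
    title = parser.title.lower()
    return {
        "title_has_brand": brand.lower() in title,
        "title_has_category": category.lower() in title,
        "has_description": bool(parser.description.strip()),
        "images_missing_alt": parser.images_missing_alt,
    }

# Made-up page for illustration.
page = """<html><head><title>AcmePay | Payroll software for startups</title>
<meta name="description" content="Payroll for teams under 50 people.">
</head><body><img src="a.png" alt="Dashboard"><img src="b.png"></body></html>"""
report = audit_page(page, "AcmePay", "payroll software")
print(report)
```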

For a quick-win framework, see “7 Quick Wins: Metadata SEO Rankings.”

Step 6: Increase the “trusted footprint” Claude can learn from

You can improve on-site clarity and still lose mentions if competitors dominate trusted third-party references.

Aim for a credible footprint in places that often shape brand understanding:

- Industry directories and marketplaces relevant to your category

- High-quality reviews and comparison sites (where appropriate)

- Partner pages (integrations, resellers, associations)

- Expert commentary, podcasts, and reputable press

The goal is not backlinks for PageRank alone. The goal is consistent, third-party reinforcement of what your entity is and when it should be recommended.

Step 7: Monitor “share of voice” in Claude, not just presence

Presence is binary. Growth is competitive.

Track:

- Presence rate: how many prompts mention you at all

- List placement: where you appear when options are ranked

- Competitor displacement: which brands show up instead of you

- Description accuracy: whether Claude explains you correctly

- Local accuracy (multi-location): correct branch, hours, service area

Here is a measurement set you can align across teams.

| KPI | What it tells you | How to use it |
|---|---|---|
| Prompt presence rate | Coverage across your demand map | Prioritize prompt clusters with revenue impact |
| Mention rank (in lists) | Competitive strength | Identify which competitor you need to outrank in “shortlists” |
| Accuracy score | Risk control (wrong claims, wrong positioning) | Trigger content fixes, policy pages, comparison clarifications |
| AI share of voice | Market visibility relative to competitors | Track momentum after releases, PR, and content launches |
| Location correctness rate | Real-world business accuracy | Fix NAP, schema, and location pages to reduce misrouting |
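Several of these KPIs can be computed directly from logged results. The sketch below is one possible set of definitions rather than a standard: presence rate is the fraction of prompts that mention the brand, share of voice is a brand's mentions divided by all brand mentions, and mention rank is the average list position. The input format (prompt mapped to an ordered list of brands) and all brand names are hypothetical.

```python
from collections import Counter

def presence_rate(results, brand):
    """Fraction of prompts whose answer mentions the brand at all."""
    hits = sum(1 for brands in results.values() if brand in brands)
    return hits / len(results) if results else 0.0

def share_of_voice(results):
    """Each brand's mentions divided by all brand mentions across prompts."""
    counts = Counter(b for brands in results.values() for b in brands)
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}

def mean_rank(results, brand):
    """Average 1-based list position over the prompts where the brand appears."""
    ranks = [brands.index(brand) + 1 for brands in results.values() if brand in brands]
    return sum(ranks) / len(ranks) if ranks else None

# Made-up results: prompt -> brands in the order the answer listed them.
results = {
    "best payroll software for startups": ["ExampleCorp", "AcmePay", "OtherCo"],
    "ExampleCorp alternatives": ["OtherCo", "AcmePay"],
    "payroll for agencies": ["ExampleCorp", "OtherCo"],
    "is AcmePay legit": ["AcmePay"],
}

print(presence_rate(results, "AcmePay"))
print(share_of_voice(results))
print(mean_rank(results, "AcmePay"))
```

Whatever definitions you pick, keep them fixed between runs so that changes in the numbers reflect changes in Claude's answers rather than changes in the formula.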

How CapstonAI helps (without turning this into guesswork)

Doing the above manually is possible, but it is hard to scale and even harder to keep consistent as models and markets shift.

CapstonAI is built for operational AI visibility work, including:

- AI visibility scans to measure how Claude (and other engines) mention your brand

- Prompt and mention mapping so you can connect demand prompts to the pages that should win

- Competitor and market tracking to monitor who is taking share of voice

- Automated content recommendations that translate gaps into actionable fixes

- CMS integration for instant fixes plus AI-ready FAQ and metadata publishing

- Multi-location brand management, alerts, and share of voice analytics for teams that need consistency at scale

Frequently Asked Questions

Why doesn’t Claude mention my brand even when I rank on Google?

Claude mentions depend on more than rankings. If your entity is unclear, your pages are not easily summarizable, or competitors have stronger trusted coverage, Claude may skip you.

Can I “optimize for Claude” the same way I optimize for SEO?

The overlap is real (structured data, clear page structure, authoritative content), but the workflow is closer to AEO and GEO: you track prompts, measure mentions, and iterate on extractability and entity clarity.

Do I need schema markup to get Claude AI mentions?

Not strictly, but schema helps machines interpret your pages correctly and reduces ambiguity. It is a high-leverage baseline improvement.

How often should I monitor Claude mentions?

Weekly for core prompts is a practical cadence. Increase frequency during launches, PR pushes, seasonal demand spikes, or major site changes.

What’s the fastest way to improve Claude AI mentions?

Fix the pages that should answer your top prompts first (pricing, comparisons, category-fit pages, FAQs), then strengthen entity signals (Organization and location data) and third-party reinforcement.

Get a free AI visibility audit for Claude (and beyond)

If you want to stop guessing and start treating Claude AI mentions as a measurable growth channel, run a baseline audit and turn it into an improvement backlog.

CapstonAI offers a free AI visibility audit to help you understand how Claude (plus ChatGPT, Gemini, and Perplexity) currently describes and recommends your brand, where the gaps are, and what to fix first.

Explore CapstonAI and request your audit at capston.ai.