AI search is no longer just “SEO, but with new SERP features.” When customers ask ChatGPT, Gemini, Claude, or Perplexity for recommendations, they often get a single synthesized answer, and that answer may or may not include your brand, your locations, your product lines, or the right positioning. That’s why teams are now shopping for an AI platform that can measure and improve AI visibility with the same rigor they bring to rankings, analytics, and conversion.

This buying guide breaks down the must-have features to look for, the pitfalls that create false confidence, and the questions you should ask before signing a contract.

What an “AI visibility platform” should actually do

A true AI visibility platform sits between classic SEO tooling and brand monitoring. It answers four operational questions:

- Presence: Do AI engines mention or recommend you for the prompts that matter?

- Quality: Are you described accurately (offer, pricing approach, locations, constraints, category fit)?

- Drivers: What sources, pages, entities, and third-party references appear to influence those mentions?

- Action: What should your team change (content, metadata, FAQs, structured data, citations), and how do you track impact over time?

If the platform can’t reliably connect measurement to fixes (and fixes to outcomes), it becomes an interesting dashboard, not a growth channel.

Buying mindset: treat this like analytics infrastructure, not a content tool

Many “AI SEO” tools are essentially content assistants with a GEO/AEO (generative engine optimization / answer engine optimization) layer added on top. That can help with drafting, but it does not solve the hard problems of AI visibility:

- Coverage across multiple AI engines and prompt types

- Consistent tracking (so you can detect real movement, not randomness)

- Competitive baselines (your visibility is relative)

- Governance (multi-team edits, brand safety, audit trails)

- Activation (publishing metadata and FAQs into your CMS without a manual queue)

If you’re building a predictable AI visibility program, evaluate platforms the way you’d evaluate a BI or attribution tool.

Must-have features for AI visibility (with buying criteria)

Below are the features that matter most for brands, retailers, and agencies, plus what “good” looks like when you’re evaluating vendors.

1) Multi-engine coverage (and clear scope boundaries)

Why it matters: AI visibility is fragmented. A brand can be strong in Google AI Overviews and weak in Perplexity, or the opposite.

What to look for:

- Coverage for the engines your customers actually use (at minimum, the major assistants and AI answer engines)

- Clear definitions of what is being measured (mentions, citations, recommendations, category inclusion)

- Transparent limitations (for example, regional availability, personalization, or logged-in variability)

Vendor questions to ask:

- “Which engines do you support today, and how do you handle engine changes?”

- “Do you measure both citations (linked sources) and unlinked brand mentions?”

Related reading: What is Generative Engine Optimization (GEO)?

2) Prompt and intent coverage mapping

Why it matters: Most teams track a few vanity prompts (for example, “best X tools”), but miss the intent layers that drive revenue, like comparisons, local availability, integrations, pricing expectations, and “best for” use cases.

What to look for:

- A way to map prompts by funnel stage (awareness, evaluation, purchase, support)

- Clustering by intent and entity, not just keywords

- The ability to see where you are absent (true blind spots)

Vendor questions to ask:

- “Can I upload my own prompt set, and can the platform expand it intelligently?”

- “Do you track prompts by market, product line, or location?”
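
The blind-spot view described above can be approximated with a simple grouping exercise. This is a minimal Python sketch, assuming a hypothetical prompt set where each prompt carries a funnel stage and a flag for whether the brand appeared in the answer. The field layout and sample prompts are illustrative and do not reflect any vendor's schema.

```python
from collections import defaultdict

# Hypothetical tracked prompts: (prompt text, funnel stage, brand appeared?)
PROMPTS = [
    ("best running shoe brands", "awareness", True),
    ("brand A vs brand B trail shoes", "evaluation", False),
    ("where to buy brand A shoes in Austin", "purchase", False),
    ("how to return brand A shoes", "support", True),
    ("brand A pricing for team orders", "evaluation", True),
]

def coverage_by_stage(prompts):
    """Return {stage: (covered, total)} so gaps show up per funnel stage."""
    counts = defaultdict(lambda: [0, 0])
    for _text, stage, appeared in prompts:
        counts[stage][1] += 1
        if appeared:
            counts[stage][0] += 1
    return {stage: tuple(pair) for stage, pair in counts.items()}

def blind_spots(prompts):
    """List the prompts where the brand was absent from the answer."""
    return [text for text, _stage, appeared in prompts if not appeared]

if __name__ == "__main__":
    for stage, (covered, total) in coverage_by_stage(PROMPTS).items():
        print(f"{stage}: {covered}/{total} prompts covered")
    print("Blind spots:", blind_spots(PROMPTS))
```

In a demo, asking the vendor to produce the equivalent of `blind_spots` for your own prompt set is a quick way to see whether absence is tracked at all.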

3) Mention ledger and recommendation context (not just “you were mentioned”)

Why it matters: Being mentioned is not always positive. You need to know how you’re framed.

What to look for:

- Captured answer text with timestamps

- Positioning tags (recommended, compared, excluded, warned against)

- Entity associations (what categories and alternatives you’re grouped with)

Vendor questions to ask:

- “Can I see the exact output that triggered the mention metric?”

- “Do you track changes in how the model describes our differentiators?”
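
To make "mention ledger" concrete, here is one possible shape for a single ledger entry, sketched in Python. The class name, field names, and the exact tag spellings are assumptions for illustration. The only parts taken from the list above are the ideas of stored answer text, a timestamp, a positioning tag, and entity associations.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Positioning tags mirroring the list above (spellings are illustrative).
POSITIONING_TAGS = {"recommended", "compared", "excluded", "warned_against"}

@dataclass(frozen=True)
class MentionRecord:
    engine: str               # which AI engine produced the answer
    prompt: str               # the prompt that was tracked
    answer_text: str          # the exact captured output
    positioning: str          # one of POSITIONING_TAGS
    grouped_with: tuple = ()  # alternatives named alongside the brand
    captured_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self):
        # Reject tags outside the agreed vocabulary so reports stay comparable.
        if self.positioning not in POSITIONING_TAGS:
            raise ValueError(f"unknown positioning tag: {self.positioning}")
```

Storing the full `answer_text` next to the tag is what lets a reviewer check the evidence behind any metric later.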

4) Competitive and market tracking (share of voice for AI)

Why it matters: AI answers are often short. If your competitor is the default recommendation, you can be invisible even if your own site is strong.

What to look for:

- AI share of voice across a defined competitor set

- Category-level baselines (what “good” looks like in your niche)

- Trend views that separate volatility from sustained movement

Vendor questions to ask:

- “How do you define AI share of voice, and can we customize the competitor set?”

- “Can I segment by prompt cluster, product category, or region?”
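
Vendors define AI share of voice differently, which is why the first question above matters. As a reference point, the sketch below shows one simple definition in Python: each brand's share of all brand mentions across a set of captured answers. The function name, the naive substring matching, and the brand names are assumptions for illustration.

```python
from collections import Counter

def share_of_voice(answers, brands):
    """Fraction of all brand mentions that belong to each brand.

    `answers` is a list of captured answer texts; `brands` is the competitor set.
    A brand counts at most once per answer (case-insensitive substring match).
    """
    mentions = Counter()
    for text in answers:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: mentions[brand] / total for brand in brands}

if __name__ == "__main__":
    sample_answers = [
        "For small teams, Acme and Borealis are common picks.",
        "Borealis is often recommended for this.",
        "Consider Borealis or Cirrus.",
    ]
    print(share_of_voice(sample_answers, ["Acme", "Borealis", "Cirrus"]))
```

Substring matching will miscount brands whose names are common words or appear inside other words, so a production system would need proper entity matching. The point of the sketch is that the denominator (all mentions in a defined competitor set) should be explicit.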

Related reading: Which GEO dashboards vs SEO reports should you use in 2025?

5) Source attribution and influence diagnostics

Why it matters: To improve visibility, you need hypotheses you can test. That requires understanding what sources appear in answers, and where your brand is (or isn’t) represented.

What to look for:

- Source and citation tracking (when engines provide citations)

- Page-level attribution (which URLs are being used)

- Gaps across entity coverage (missing “about,” “pricing,” “locations,” “comparison,” and “FAQ” surfaces)

Vendor questions to ask:

- “Do you show which sources are cited when our competitors are recommended?”

- “Can you flag when outdated pages are being used as references?”

6) Actionable recommendations that are tied to measurement

Why it matters: Generic advice like “add schema” doesn’t help a team prioritize. Recommendations should connect directly to the prompt clusters and pages that affect visibility.

What to look for:

- Recommendations that reference specific pages, entities, and missing elements

- Prioritization logic (impact, effort, coverage)

- Change tracking (what was implemented, when, and what happened afterward)

If you want a deeper implementation playbook for one major surface, see: How to Optimize for AI Overviews

7) AI-ready FAQ and metadata publishing

Why it matters: A large portion of AI-friendly improvements are structural: FAQs that answer common prompts, clarifying metadata, and consistent entity definitions. Publishing those changes quickly is where many programs stall.

What to look for:

- Support for generating and publishing FAQ blocks and metadata safely

- Guardrails to avoid duplicative or conflicting answers

- Compatibility with structured data approaches recommended by search engines

For reference on structured data best practices, Google’s documentation is the canonical source: Understand structured data.
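
As an illustration of what publishing an FAQ block with guardrails can involve, the Python sketch below builds schema.org `FAQPage` JSON-LD from question and answer pairs and skips duplicate questions. The function names and the duplicate check are assumptions for illustration. The `FAQPage`, `Question`, and `Answer` types are part of the schema.org vocabulary.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    seen = set()
    entities = []
    for question, answer in pairs:
        key = question.strip().lower()
        if key in seen:  # simple guardrail against duplicate questions
            continue
        seen.add(key)
        entities.append({
            "@type": "Question",
            "name": question.strip(),
            "acceptedAnswer": {"@type": "Answer", "text": answer.strip()},
        })
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": entities,
    }

def to_script_tag(doc):
    """Serialize to a JSON-LD script tag, escaping '<' so answer text
    cannot close the tag early."""
    payload = json.dumps(doc, indent=2).replace("<", "\\u003c")
    return '<script type="application/ld+json">' + payload + "</script>"

if __name__ == "__main__":
    doc = faq_jsonld([
        ("Do you ship internationally?", "Yes, to selected countries."),
        ("do you ship internationally? ", "Duplicate that gets skipped."),
    ])
    print(to_script_tag(doc))
```

Whether and how a given engine uses this markup is outside what the sketch can show. It only demonstrates producing valid, de-duplicated output that a CMS template could embed.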

8) CMS integration and instant fixes

Why it matters: Insight without execution becomes backlog. The best platforms reduce time-to-fix.

What to look for:

- CMS integration that supports controlled publishing workflows

- The ability to roll out changes across templates (for example, location pages, category pages)

- Auditability (who changed what, and the ability to revert)

9) Multi-location brand management (for retailers and service brands)

Why it matters: AI engines frequently answer “near me” and “best in [city]” style prompts with location-specific context. If your locations are inconsistent, you get inconsistent answers.

What to look for:

- Location-level monitoring and prompt segmentation

- Brand info consistency checks (NAP, hours, service areas, policies)

- Bulk workflows for multi-location content and FAQs
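
A brand info consistency check can start very simply. The Python sketch below compares a listing's name, address, and phone (NAP) against a reference record after light normalization. The record layout, function names, and sample data are hypothetical, and real address matching usually needs more than lowercasing (for example, "St" versus "Street").

```python
import re

def normalize_phone(phone):
    """Keep digits only so formatting differences do not count as mismatches."""
    return re.sub(r"\D", "", phone)

def normalize_text(value):
    """Lowercase and collapse whitespace."""
    return " ".join(value.lower().split())

def nap_mismatches(reference, listing):
    """Return the NAP fields where a listing disagrees with the reference."""
    normalizers = {
        "name": normalize_text,
        "address": normalize_text,
        "phone": normalize_phone,
    }
    return [
        field for field, norm in normalizers.items()
        if norm(reference.get(field, "")) != norm(listing.get(field, ""))
    ]

if __name__ == "__main__":
    reference = {"name": "Acme Dental", "address": "12 Main St, Austin, TX",
                 "phone": "(512) 555-0100"}
    listing = {"name": "ACME  Dental", "address": "12 Main Street, Austin, TX",
               "phone": "512-555-0100"}
    print(nap_mismatches(reference, listing))
```

Here the name and phone match after normalization, while the address is flagged because the sketch does not expand abbreviations. That is the kind of edge case worth raising with a vendor.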

10) Alerts, incident response, and brand defense

Why it matters: AI answers can change quickly after updates, new sources, or reputation events. Teams need early warning.

What to look for:

- Critical alerts for drops in mentions, sentiment shifts, or competitor takeovers

- Alert routing (email, Slack, etc.) and thresholds you can configure

- A visible history of what changed, so you can respond with evidence
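
Configurable thresholds can be as simple as comparing the latest reading with a recent baseline. The following Python sketch flags a relative drop in mention rate. The function name, the 20% default threshold, and the minimum history length are assumptions for illustration, not recommended values.

```python
from statistics import mean

def mention_rate_alert(history, current, drop_threshold=0.2, min_history=3):
    """Flag a drop when the current mention rate falls well below the recent average.

    `history` holds earlier mention rates (0.0 to 1.0); `drop_threshold` is the
    relative drop that triggers an alert.
    """
    if len(history) < min_history:
        return None  # too little data to separate movement from noise
    baseline = mean(history)
    if baseline == 0:
        return None  # nothing to drop from
    relative_drop = (baseline - current) / baseline
    if relative_drop >= drop_threshold:
        return {
            "baseline": baseline,
            "current": current,
            "relative_drop": relative_drop,
        }
    return None

if __name__ == "__main__":
    print(mention_rate_alert([0.50, 0.50, 0.50], 0.30))  # alert payload
    print(mention_rate_alert([0.50, 0.50, 0.50], 0.48))  # None
```

Requiring a minimum history before alerting is one way to avoid paging a team over normal answer-to-answer variation.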

11) Reporting that executives can understand

Why it matters: AI visibility can feel fuzzy unless it’s tied to clear KPIs.

What to look for:

- A clean set of core metrics (mentions, citations, AI share of voice, prompt coverage)

- Segmentation by product line and region

- Exportable reporting for clients (agencies) and leadership reviews

If you’re aligning AI visibility with existing SEO measurement, this guide helps unify the stack: SEO KPI Dashboard: 18 Metrics That Drive Revenue in 2026

A practical feature checklist (use this in vendor demos)

Use the table below as a fast way to evaluate whether a platform is built for AI visibility outcomes, not just AI content.

| Feature area | What “must-have” means | What to watch out for in demos |
|---|---|---|
| Multi-engine tracking | Tracks visibility across key AI engines you care about | Only supports one engine, or uses vague “AI score” metrics |
| Prompt mapping | Clusters prompts by intent and shows gaps | Only tracks a handful of generic prompts |
| Mention context | Stores outputs and shows how you are framed | Mentions without the underlying answer text |
| Competitive tracking | AI share of voice over time with segmentation | “Competitors” limited to a static list or no baselines |
| Source diagnostics | Shows citations, sources, and page-level influence | No explanation of why a result occurred |
| Recommendations | Specific fixes tied to prompts/pages/entities | Generic “add schema” or “write more content” advice |
| Publishing workflow | Supports metadata/FAQ publishing with controls | Recommendations that require manual copy-paste for everything |
| Alerts | Configurable alerts for visibility drops and risks | Only weekly reports, no incident response |
| Governance | Roles, approvals, audit trails | One shared login, no permissions |
| Integrations | Works with your CMS and reporting stack | Data trapped in a dashboard, limited exports |

Scoring vendors: a simple AI platform scorecard

To avoid selecting a tool that looks impressive but fails in production, score vendors on weighted criteria.

| Category | Suggested weight | What “good” looks like |
|---|---|---|
| Measurement coverage | 25% | Multi-engine, prompt coverage, repeatable tracking |
| Diagnostics | 20% | Clear context, sources, competitive baselines |
| Activation | 20% | Recommendations plus CMS workflows and change tracking |
| Governance and safety | 15% | Roles, approvals, auditability, brand controls |
| Reporting and exports | 10% | Exec-friendly KPIs, agency-ready outputs |
| Support and roadmap fit | 10% | Clear roadmap, reliable support, documented methodology |

Tip: ask each vendor to walk through the same three prompt clusters (one awareness, one evaluation, one local or integration-driven). You’ll learn quickly whether the platform is built for real-world variation.
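
The weighted scorecard can be computed in a few lines. The Python sketch below uses the suggested weights from the table above. The 0 to 5 rating scale, the category keys, and the sample ratings are assumptions for illustration.

```python
# Suggested weights from the scorecard table (they sum to 1.0).
WEIGHTS = {
    "measurement_coverage": 0.25,
    "diagnostics": 0.20,
    "activation": 0.20,
    "governance_and_safety": 0.15,
    "reporting_and_exports": 0.10,
    "support_and_roadmap_fit": 0.10,
}

def weighted_score(ratings, weights=WEIGHTS):
    """Combine 0-5 category ratings into one weighted score on the same scale."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[category] * ratings[category] for category in weights)

if __name__ == "__main__":
    vendor_a = {
        "measurement_coverage": 5,
        "diagnostics": 4,
        "activation": 3,
        "governance_and_safety": 4,
        "reporting_and_exports": 2,
        "support_and_roadmap_fit": 3,
    }
    print(f"Vendor A: {weighted_score(vendor_a):.2f} / 5")
```

Keep the per-category ratings next to the total when comparing vendors, since a single number can hide a weak category that matters to your team.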

Common buying mistakes (and how to avoid them)

Mistake 1: Buying a “GEO content tool” when you need measurement infrastructure

If the platform primarily generates content but cannot show stable tracking and competitive baselines, you’ll struggle to prove impact.

Mistake 2: Trusting a single composite score

Composite scores hide edge cases. Insist on the underlying evidence: prompts, outputs, sources, and history.

Mistake 3: Ignoring multi-location and multi-market complexity

Retailers and service brands often discover that AI answers differ by city, country, and even phrasing. If you can’t segment by location and market, you can’t manage it.

Mistake 4: No plan for implementation ownership

Even the best recommendations fail without ownership. Decide upfront who owns:

- Metadata and structured data changes

- FAQ creation and maintenance

- Brand entity consistency (about pages, location pages, policy pages)

- Monitoring and incident response

Where CapstonAI fits (and when it’s a strong match)

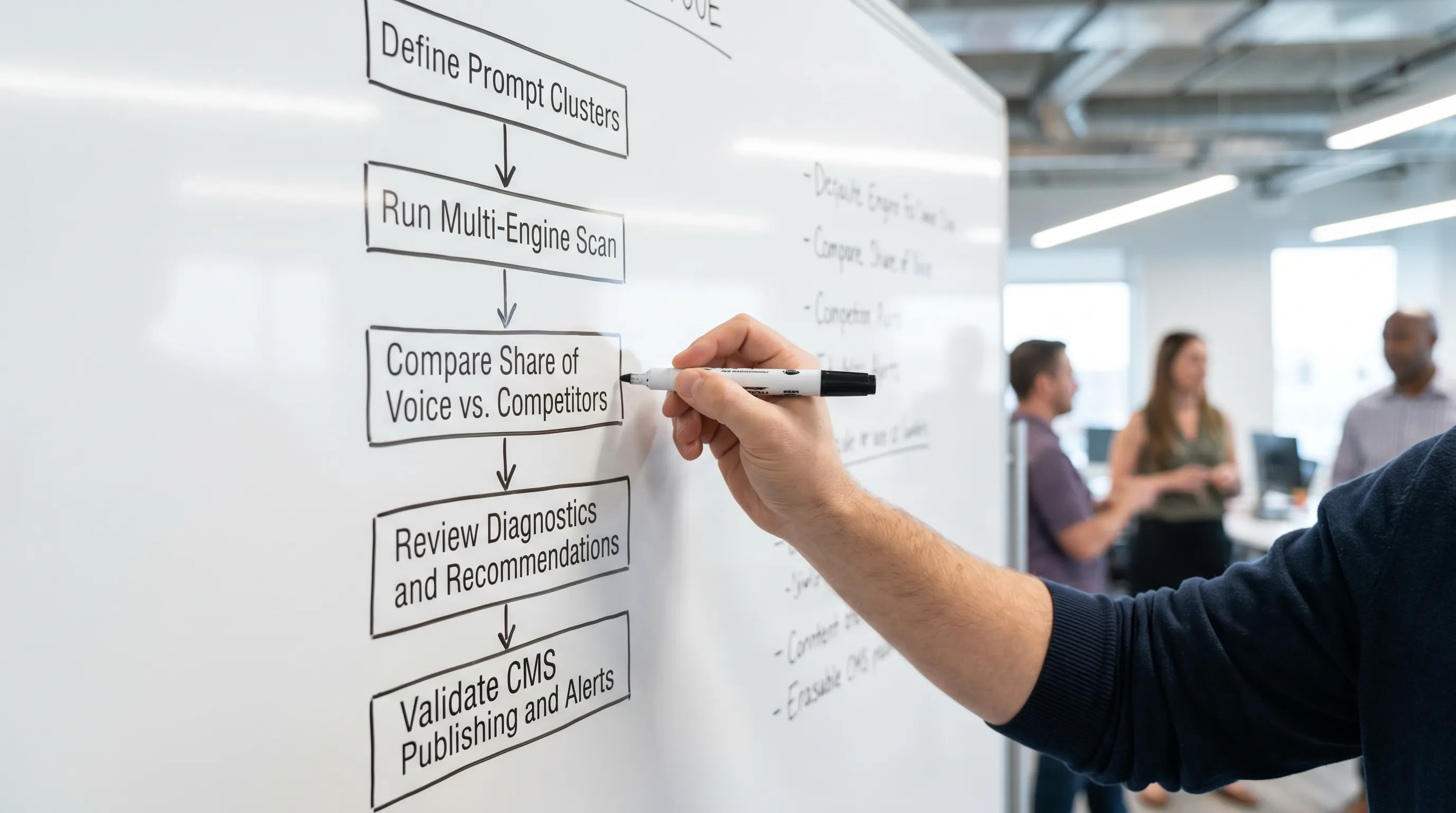

CapstonAI is positioned as an AI visibility platform for brands, retailers, and agencies that need to measure, improve, and defend AI search presence across major AI engines. If your priority is turning AI search into a managed channel, look for platforms (including CapstonAI) that combine:

- AI visibility scans and prompt mapping

- Competitor and market tracking with share of voice analytics

- Automated content recommendations tied to AI visibility gaps

- CMS integration for faster fixes

- AI-ready FAQ and metadata publishing

- Multi-location brand management

- Critical alert dashboards

If you’re still deciding among vendors, CapstonAI also maintains a broader comparison of the category here: Best AI SEO Tools in 2026: 10 Platforms Compared.

Frequently Asked Questions

What is an AI visibility platform? An AI visibility platform measures how AI engines mention, cite, and recommend your brand across important prompts, then helps you identify gaps and implement fixes (content, FAQs, metadata, structured data, and consistency).

Which features matter most when buying an AI platform for AI visibility? Prioritize multi-engine tracking, prompt and intent mapping, mention context (not just counts), competitive share of voice, source diagnostics, actionable recommendations, CMS publishing workflows, and alerts.

How is this different from SEO tools like rank trackers? Rank trackers focus on positions and clicks in classic SERPs. AI visibility focuses on presence inside generated answers, citations, recommendation context, and prompt coverage, often across multiple assistants and answer engines.

Can I improve AI visibility without changing my website? Sometimes you can improve outcomes through third-party sources and citations, but durable improvement typically requires on-site changes like clearer entity pages, FAQs, better metadata, and structured data.

How do we prove ROI from AI visibility work? Start by tracking prompt coverage and AI share of voice for revenue-aligned intent clusters, then connect changes to assisted conversions, branded demand, lead quality, and downstream pipeline (similar to how teams report on AEO and zero-click impact).

Get a baseline with a free AI visibility audit

If you’re evaluating an AI platform, the fastest next step is to establish a baseline: where you appear today, where competitors dominate, and which prompt clusters represent the biggest blind spots.

CapstonAI offers a free AI visibility audit to help you benchmark mentions, identify fixable gaps, and decide what capabilities you actually need. Start here: CapstonAI.